Journal of South China University of Technology (Natural Science Edition), 2023, Vol. 51, Issue 5: 13-23. doi: 10.12141/j.issn.1000-565X.220684

Special Issue: Computer Science and Technology, 2023

• Computer Science & Technology •

Contrastive Knowledge Distillation Method Based on Feature Space Embedding

YE Feng, CHEN Biao, LAI Yizong

School of Mechanical and Automotive Engineering, South China University of Technology, Guangzhou 510640, Guangdong, China

Received: 2022-10-24 Online: 2023-05-25 Published: 2023-01-16

Contact: YE Feng (1972-), male, Ph.D., associate professor, whose research focuses on machine vision and on sensing and control for mobile robots. E-mail: mefengye@scut.edu.cn

About author: YE Feng (1972-), male, Ph.D., associate professor, whose research focuses on machine vision and on sensing and control for mobile robots.

Supported by: the Key-Area R&D Program of Guangdong Province (2021B0101420003)


Cite this article

YE Feng, CHEN Biao, LAI Yizong. Contrastive Knowledge Distillation Method Based on Feature Space Embedding [J]. Journal of South China University of Technology (Natural Science Edition), 2023, 51(5): 13-23.


Table 2

Comparison of performance among the CE algorithm and the FSECD algorithm with class-level and instance-level policies on the CIFAR-100 dataset

| Algorithm | Teacher + student model pair | Acc1/% (B=128) | Acc1/% (B=512) | Acc1/% (B=1024) |
|---|---|---|---|---|
| CE | WRN-40-2+WRN-40-1 | 73.26 | 69.75 | 68.11 |
| CE | ResNet32×4+ResNet8×4 | 72.50 | 70.63 | 69.14 |
| FSECD with class-level policy | WRN-40-2+WRN-40-1 | 73.37 | 71.52 | 70.33 |
| FSECD with class-level policy | ResNet32×4+ResNet8×4 | 75.74 | 72.94 | 71.24 |
| FSECD with instance-level policy | WRN-40-2+WRN-40-1 | 74.49 | 74.39 | 73.26 |
| FSECD with instance-level policy | ResNet32×4+ResNet8×4 | 76.57 | 75.58 | 74.58 |

Table 3

Comparison of Acc1 among six models with the same network architecture trained by nine knowledge distillation algorithms on CIFAR-100 dataset"

| 算法 | ResNet56+ResNet20 | ResNet110+ResNet32 | ResNet32×4+ResNet8×4 | WRN-40-2+WRN-16-2 | WRN-40-2+WRN-40-1 | VGG13+VGG8 |

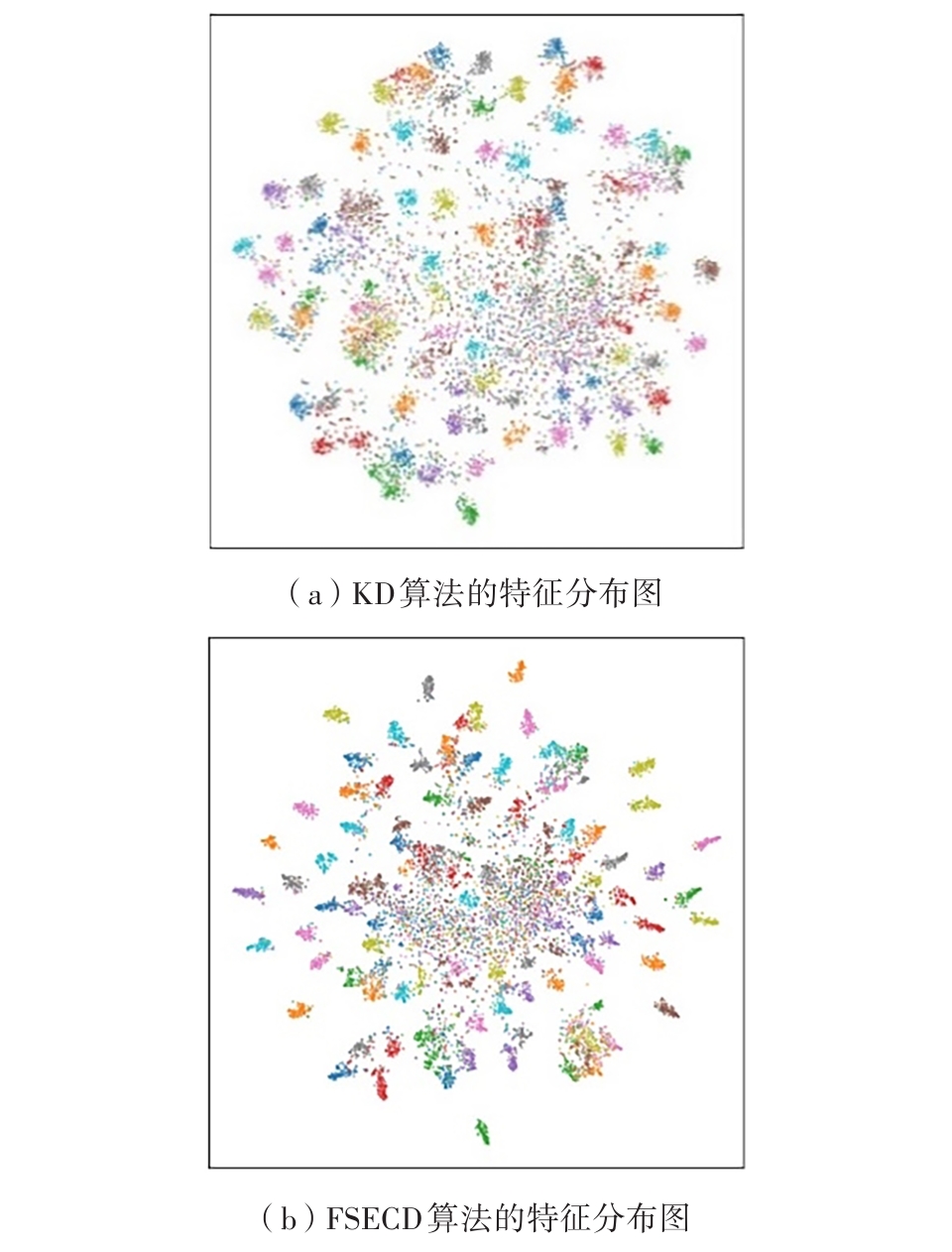

|---|---|---|---|---|---|---|

| CE | 69.06 | 71.14 | 72.51 | 73.26 | 71.98 | 70.36 |

| KD | 70.66 | 73.08 | 73.33 | 74.92 | 73.54 | 72.98 |

| FitNet | 69.21 | 71.06 | 73.50 | 73.58 | 72.24 | 71.02 |

| AT | 70.55 | 72.31 | 73.44 | 74.08 | 72.77 | 71.43 |

| RKD | 69.61 | 71.82 | 71.90 | 73.35 | 72.22 | 71.48 |

| OFD | 70.98 | 73.23 | 74.95 | 75.24 | 74.33 | 73.95 |

| CRD | 71.16 | 73.48 | 75.51 | 75.48 | 74.14 | 73.94 |

| DKD1) | 71.32 | 73.77 | 75.92 | 75.32 | 74.14 | 74.41 |

| FSECD | 71.39 | 73.51 | 76.57 | 75.62 | 74.49 | 74.11 |

Table 4

Comparison of Acc1 (%) among five models with different network architectures trained by nine knowledge distillation algorithms on the CIFAR-100 dataset

| Algorithm | ResNet32×4+ShuffleNetV1 | WRN-40-2+ShuffleNetV1 | VGG13+MobileNetV2 | ResNet50+MobileNetV2 | ResNet32×4+ShuffleNetV2 |
|---|---|---|---|---|---|
| CE | 70.50 | 70.50 | 64.60 | 64.60 | 71.82 |
| KD | 74.07 | 74.83 | 67.37 | 67.35 | 74.45 |
| FitNet | 73.59 | 73.73 | 64.14 | 63.16 | 73.54 |
| AT | 71.73 | 73.32 | 59.40 | 58.58 | 72.73 |
| RKD | 72.28 | 72.21 | 64.52 | 64.43 | 73.21 |
| OFD | 75.98 | 75.85 | 69.48 | 69.04 | 76.82 |
| CRD | 75.11 | 76.05 | 69.73 | 69.11 | 75.65 |
| DKD1) | 76.45 | 76.67 | 69.29 | 69.96 | 76.70 |
| FSECD | 76.01 | 76.32 | 69.97 | 70.06 | 76.15 |

Table 5

Comparison of Acc1 and Acc5 between two network models trained by seven knowledge distillation algorithms on the ImageNet dataset

| Algorithm | Acc1/% (ResNet34+ResNet18) | Acc1/% (ResNet50+MobileNetV1) | Acc5/% (ResNet34+ResNet18) | Acc5/% (ResNet50+MobileNetV1) |
|---|---|---|---|---|
| CE | 69.75 | 68.87 | 89.07 | 88.76 |
| KD | 71.03 | 70.50 | 90.05 | 89.80 |
| AT | 70.69 | 69.56 | 90.01 | 89.33 |
| CRD | 71.17 | 71.37 | 90.13 | 90.41 |
| OFD | 70.81 | 71.25 | 89.98 | 90.34 |
| DKD1) | 71.54 | 72.01 | 90.43 | 90.02 |
| FSECD | 71.49 | 72.19 | 90.44 | 90.98 |


