Journal of South China University of Technology (Natural Science Edition) ›› 2024, Vol. 52 ›› Issue (6): 110-119. doi: 10.12141/j.issn.1000-565X.230105

Special topic: Computer Science and Technology, 2024

Improvement of Cross-Dataset Performance of Face Forgery Detection Based on Multi-Scale Spatiotemporal Features and Tampering Probabilities

HU Yongjian (胡永健)1, ZHUO Sichao (卓思超)1, LIU Beibei (刘琲贝)1, WANG Yufei (王宇飞)2, LI Jicheng (李纪成)1

1. School of Electronic and Information Engineering, South China University of Technology, Guangzhou 510640, Guangdong, China

2. School of Criminal Science and Technology, Guangdong Police College, Guangzhou 510440, Guangdong, China

Abstract:

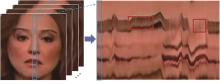

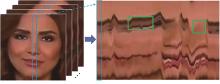

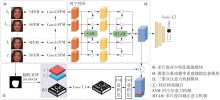

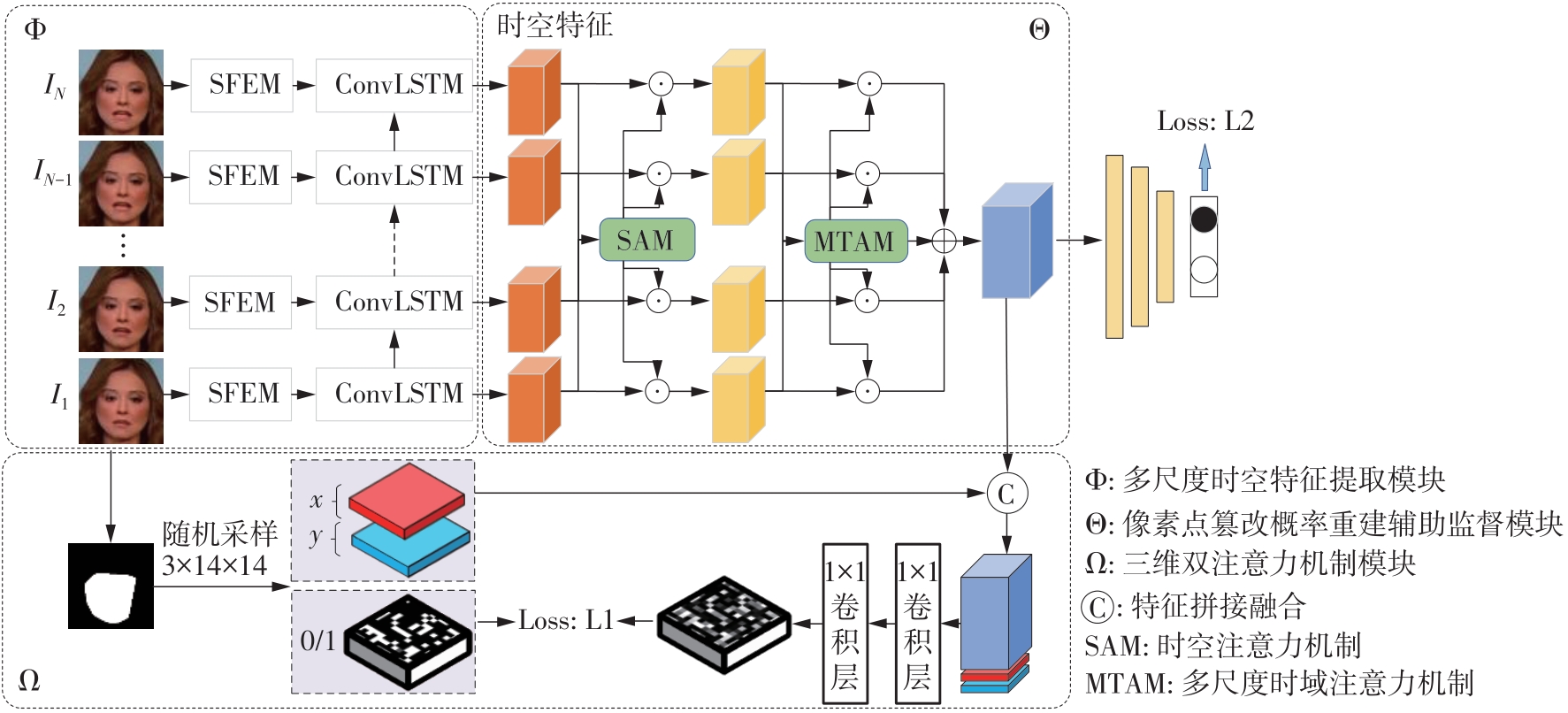

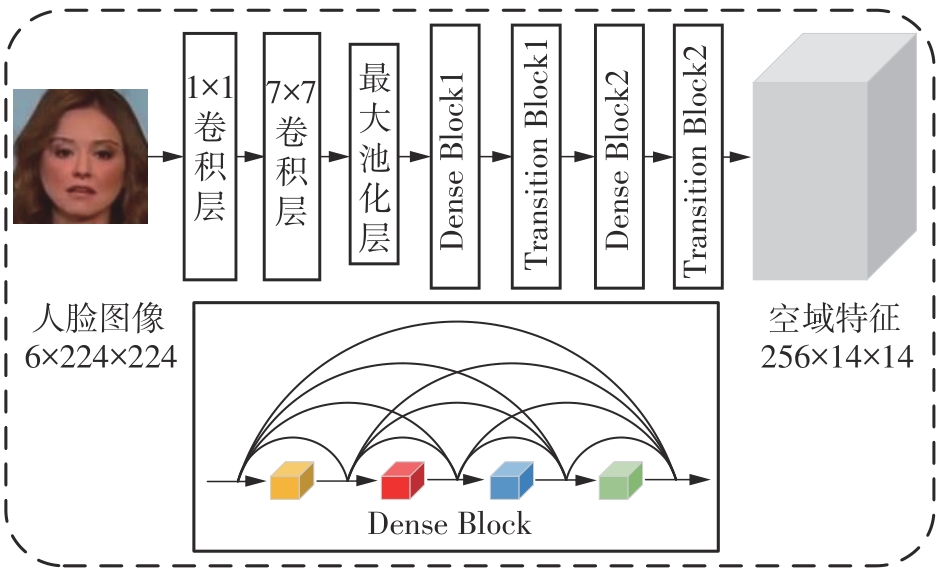

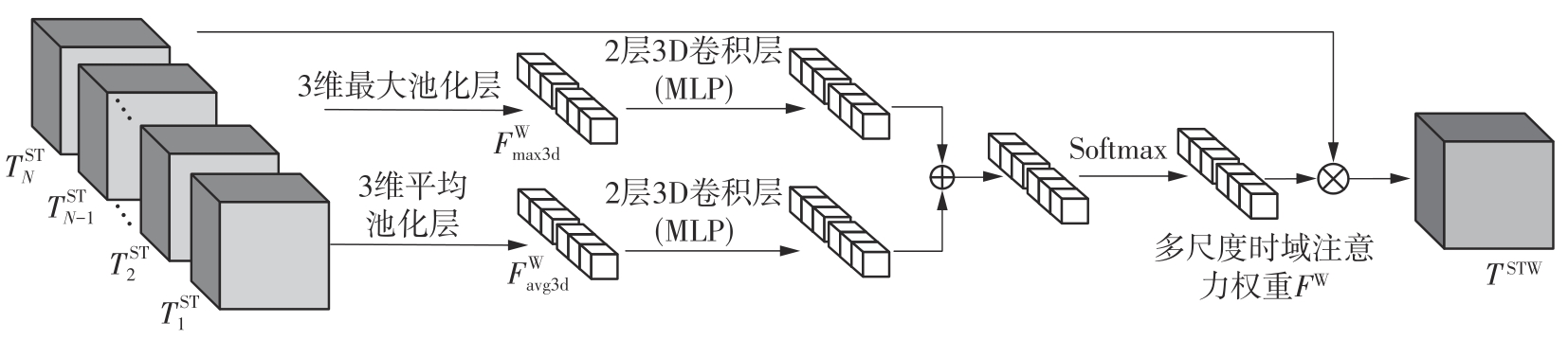

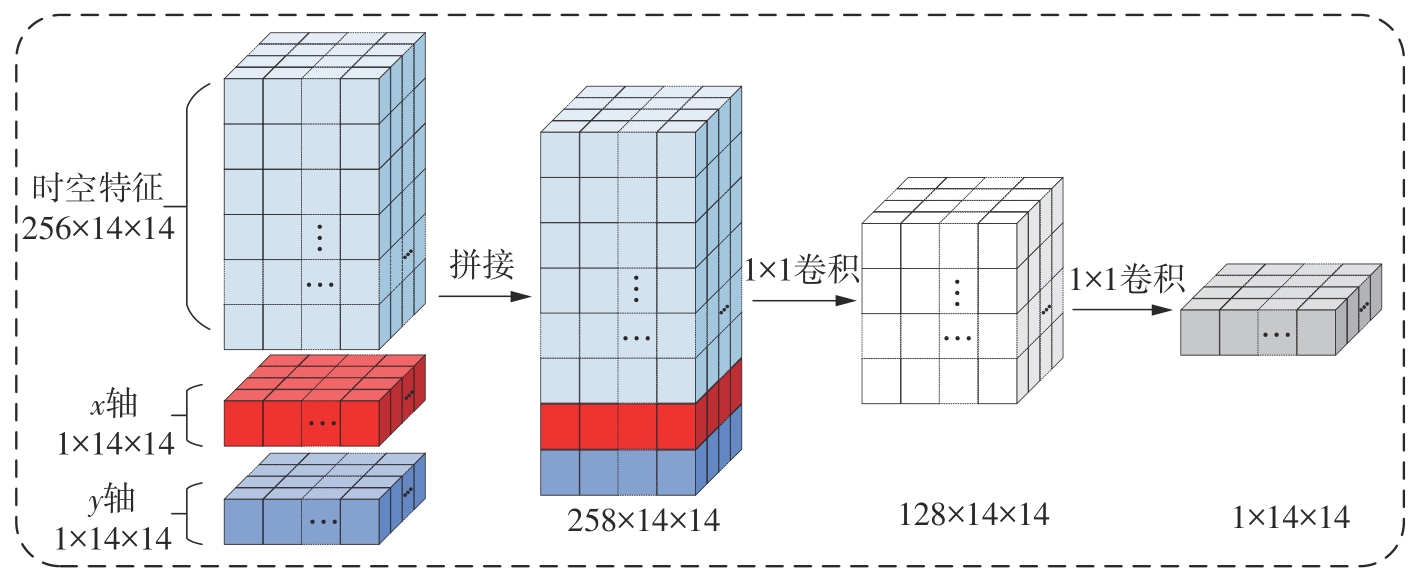

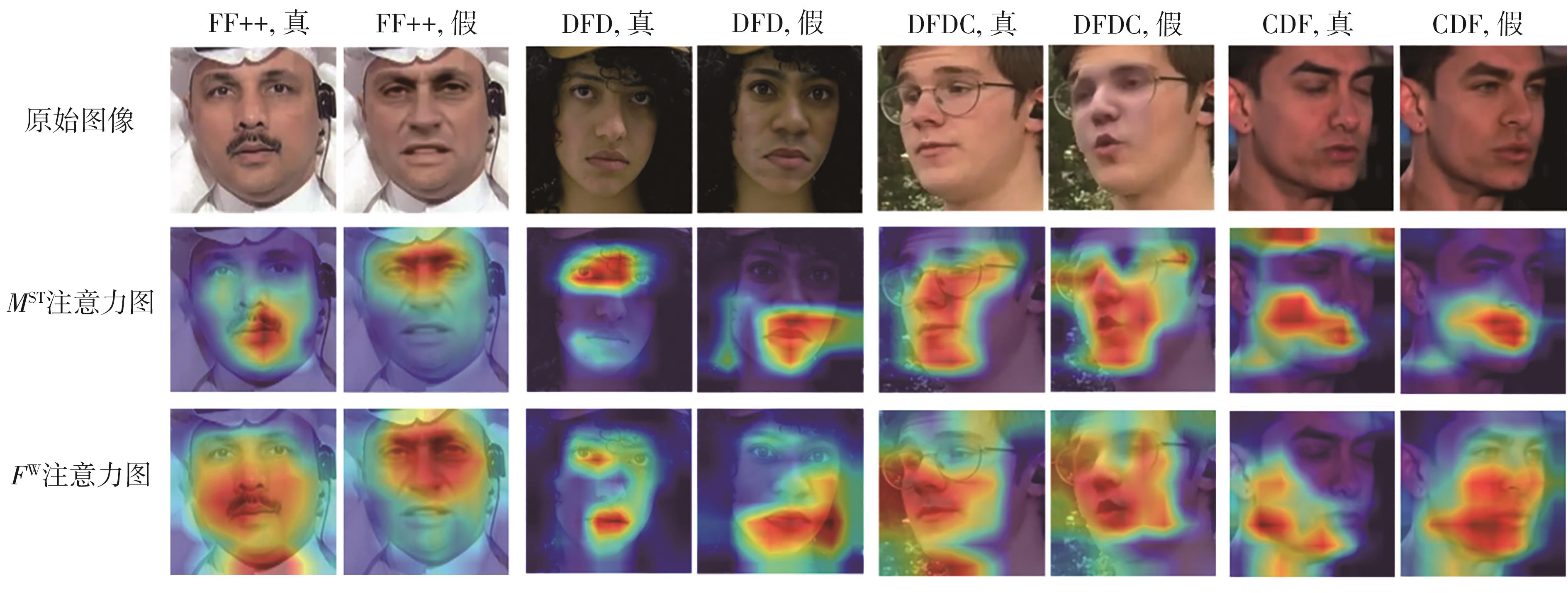

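Most current DeepFake face-swap detection algorithms rely too heavily on local features. Although they perform well in intra-dataset testing, they are prone to overfitting, so their cross-dataset performance is unsatisfactory; that is, they generalize poorly. To address this, this paper proposes a face-swap video detection algorithm based on multi-scale spatiotemporal features and tampering probabilities. It exploits the inter-frame temporal discontinuities that are widespread in fake-face videos to counter the marked performance drop that existing detectors suffer in cross-dataset, cross-manipulation, and video-compression settings, and thereby to improve generalization. The algorithm consists of three modules: a multi-scale spatiotemporal feature extraction module, designed to detect the discontinuity traces that fake-face videos leave in the temporal domain; a three-dimensional dual-attention module, designed to adaptively compute the spatiotemporal correlations among the multi-scale spatiotemporal features; and an auxiliary supervision module, designed to predict the tampering probabilities of randomly selected pixels and to construct supervision masks. The proposed algorithm is evaluated on large public benchmark datasets, including FF++, DFD, DFDC, and CDF, and compared with baseline algorithms and recently published algorithms of the same kind. The results show that, while maintaining excellent average intra-dataset detection performance, the proposed algorithm achieves the best overall performance in cross-dataset detection and under video compression, and above-average overall performance in cross-manipulation detection. The experimental results verify the effectiveness of the proposed algorithm.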
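Two ideas named in the abstract, inter-frame temporal discontinuity at several scales and supervision on randomly selected pixels, can be illustrated with a minimal sketch. This is not the paper's method: the abstract describes learned network modules, whereas the code below uses a hand-crafted mean absolute frame difference and a plain binary cross-entropy. The function names `multiscale_temporal_diff` and `sampled_pixel_bce`, the stride values, and the toy frames are all assumptions made for illustration.

```python
import math
import random


def multiscale_temporal_diff(frames, scales=(1, 2, 4)):
    """Mean absolute inter-frame difference at several temporal strides.

    frames: list of equally sized 2D lists (grayscale frames).
    Returns {stride: [score for each frame pair (t, t + stride)]}.
    Hand-crafted stand-in for a learned multi-scale feature extractor.
    """
    def mean_abs_diff(a, b):
        total = sum(abs(x - y)
                    for row_a, row_b in zip(a, b)
                    for x, y in zip(row_a, row_b))
        return total / (len(a) * len(a[0]))

    return {
        s: [mean_abs_diff(frames[t], frames[t + s])
            for t in range(len(frames) - s)]
        for s in scales
    }


def sampled_pixel_bce(prob_map, mask, num_samples, rng):
    """Binary cross-entropy averaged over randomly sampled pixels.

    prob_map: 2D list of predicted tampering probabilities in [0, 1].
    mask: 2D list of 0/1 labels (1 = tampered), same size as prob_map.
    rng: a random.Random instance, passed in for reproducibility.
    """
    height, width = len(mask), len(mask[0])
    eps = 1e-7  # keeps log() finite when a probability is exactly 0 or 1
    loss = 0.0
    for _ in range(num_samples):
        i, j = rng.randrange(height), rng.randrange(width)
        p = min(max(prob_map[i][j], eps), 1.0 - eps)
        y = mask[i][j]
        loss -= y * math.log(p) + (1 - y) * math.log(1.0 - p)
    return loss / num_samples


# Toy usage: five 2x2 frames, where frame 3 is an abrupt outlier that
# simulates a temporal discontinuity.
blank = [[0, 0], [0, 0]]
bright = [[8, 8], [8, 8]]
frames = [blank, blank, blank, bright, blank]
scores = multiscale_temporal_diff(frames, scales=(1, 2))

# Toy usage: an uninformative prediction (0.5 everywhere) on a 2x2 mask.
prob_map = [[0.5, 0.5], [0.5, 0.5]]
mask = [[1, 0], [0, 1]]
loss = sampled_pixel_bce(prob_map, mask, num_samples=16, rng=random.Random(0))
```

In the toy sequence, the outlier frame produces large scores for every frame pair that includes it and zero elsewhere, which is the kind of temporal cue the abstract says fake-face videos tend to leak. A real detector would learn such cues from data rather than threshold raw pixel differences.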

CLC number: