Journal of South China University of Technology (Natural Science Edition), 2024, Vol. 52, Issue (7): 62-71. doi: 10.12141/j.issn.1000-565X.230313

• Electronics, Communication & Automation Technology •

Attention Module Based on Feature Similarity and Feature Normalization

DU Qiliang1,2,3, WANG Yimin1, TIAN Lianfang1,4,5

1. School of Automation Science and Engineering, South China University of Technology, Guangzhou 510640, Guangdong, China

2. China-Singapore International Joint Research Institute, South China University of Technology, Guangzhou 510555, Guangdong, China

3. Key Laboratory of Autonomous Systems and Network Control of the Ministry of Education, South China University of Technology, Guangzhou 510640, Guangdong, China

4. Research Institute of Modern Industrial Innovation, South China University of Technology, Zhuhai 519170, Guangdong, China

5. Engineering Center of Guangdong Development and Reform Commission, South China University of Technology, Guangzhou 510031, Guangdong, China

Received: 2023-05-10 Online: 2024-07-25 Published: 2023-12-22

About author: DU Qiliang (born 1980), male, Ph.D., associate research fellow, mainly engaged in research on pattern recognition and machine vision. E-mail: qldu@scut.edu.cn

Supported by: the Key-Area Research and Development Program of Guangdong Province (2020B1111010002)

Cite this article

DU Qiliang, WANG Yimin, TIAN Lianfang. Attention Module Based on Feature Similarity and Feature Normalization [J]. Journal of South China University of Technology (Natural Science Edition), 2024, 52(7): 62-71.

Table 1

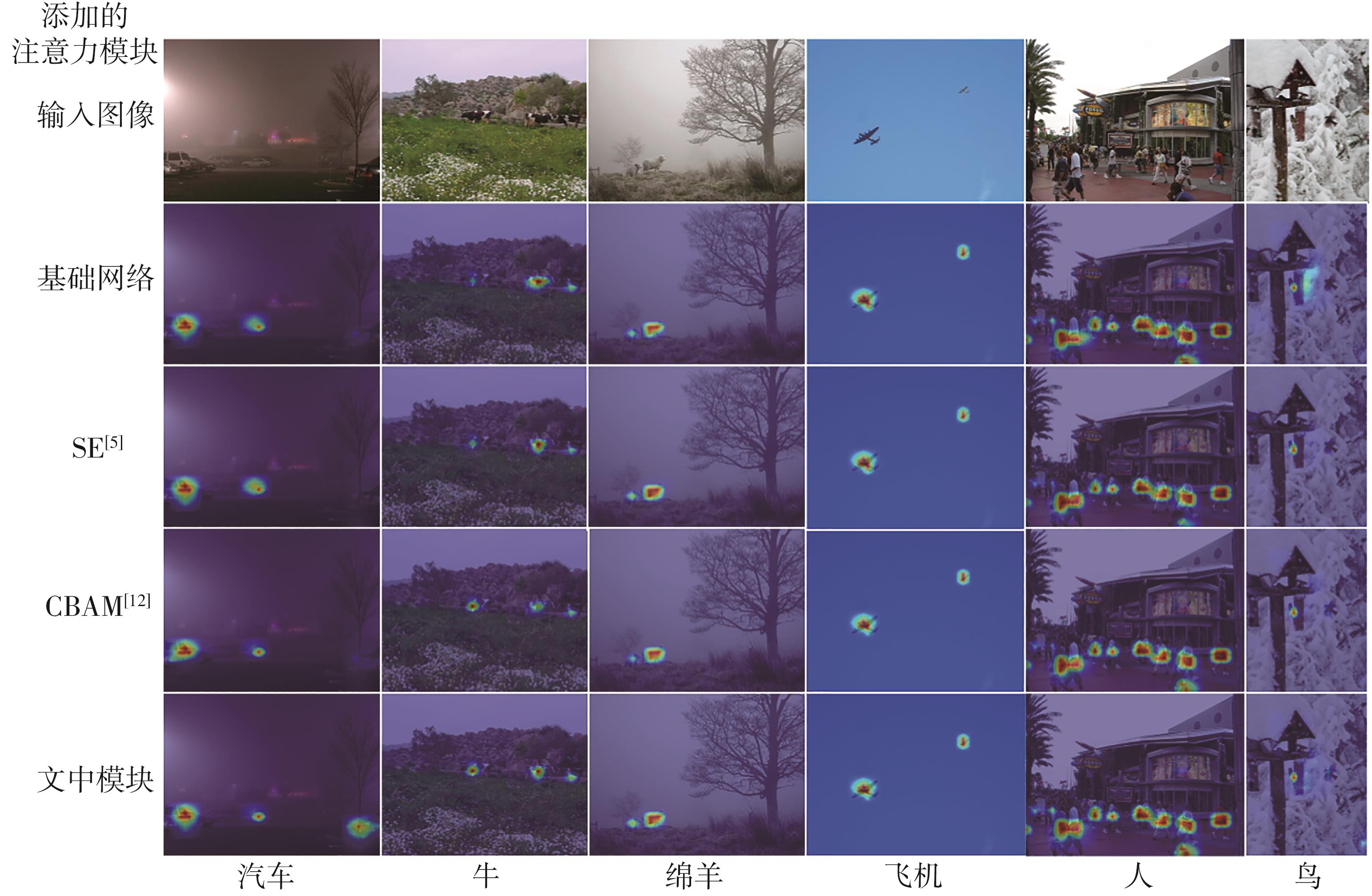

Top-1 accuracy of different attention modules on CIFAR10 datasets in different backbone networks"

| 骨干网络 | 添加不同注意力模块后的Top-1精度/% | |||||

|---|---|---|---|---|---|---|

| 基础网络 | SE[ | CBAM[ | ECA[ | SimAM[ | 文中模块 | |

| ResNet-18[ | 92.41 | 92.63 | 92.67 | 92.54 | 92.72 | 92.86 |

| PreResNet-18[ | 92.37 | 92.49 | 92.58 | 92.72 | 92.37 | 92.69 |

| ResNet-34[ | 93.10 | 93.42 | 93.53 | 93.62 | 93.53 | 93.65 |

| PreResNet-34[ | 93.07 | 93.34 | 93.49 | 93.33 | 93.28 | 93.58 |

| ResNet-50[ | 93.77 | 93.70 | 93.78 | 93.91 | 93.88 | 94.02 |

| PreResNet-50[ | 94.10 | 94.05 | 94.13 | 94.49 | 94.29 | 94.58 |

Table 2

Top-1 accuracy (%) of different attention modules on the CIFAR100 dataset with different backbone networks

| Backbone network | Baseline | SE | CBAM | ECA | SimAM | Proposed module |
|---|---|---|---|---|---|---|
| ResNet-18 | 68.87 | 69.68 | 69.69 | 69.03 | 69.99 | 70.21 |
| PreResNet-18 | 68.69 | 69.21 | 69.32 | 69.91 | 69.54 | 69.59 |
| ResNet-34 | 71.14 | 71.48 | 71.56 | 71.39 | 71.34 | 71.85 |
| PreResNet-34 | 70.65 | 71.12 | 70.72 | 71.12 | 71.08 | 71.15 |
| ResNet-50 | 72.80 | 73.30 | 73.28 | 73.48 | 73.19 | 73.61 |
| PreResNet-50 | 73.10 | 74.16 | 73.94 | 73.63 | 74.13 | 74.01 |

Table 3

Model complexity and speed of the convolutional neural network with different attention modules

| Attention module | Parameters/10^6, ResNet-18 | Parameters/10^6, ResNet-50 | FLOPs/10^6, ResNet-18 | FLOPs/10^6, ResNet-50 | Inference speed/(frames·s^-1), ResNet-18 | Inference speed/(frames·s^-1), ResNet-50 |
|---|---|---|---|---|---|---|
| Baseline | 11.1816 | 23.5285 | 37.2204 | 84.3428 | 439 | 210 |
| SE | 11.2687 | 26.0435 | 37.3247 | 86.9855 | 310 | 139 |
| CBAM | 11.2715 | 26.0611 | 37.4118 | 89.4015 | 182 | 77 |
| ECA | 11.1817 | 23.5286 | 37.2434 | 84.5286 | 297 | 133 |
| SimAM | 11.1816 | 23.5285 | 37.2204 | 84.3428 | 288 | 131 |
| Proposed module | 11.1817 | 23.5286 | 37.2280 | 84.3991 | 152 | 72 |

| 1 | DENG J, DONG W, SOCHER R, et al. ImageNet: a large-scale hierarchical image database[C]∥Proceedings of 2009 IEEE Conference on Computer Vision and Pattern Recognition. Miami: IEEE, 2009: 248-255. |

| 2 | LIN T Y, MAIRE M, BELONGIE S, et al. Microsoft COCO: common objects in context[C]∥Proceedings of the 13th European Conference on Computer Vision. Zurich: Springer, 2014: 740-755. |

| 3 | HE K, ZHANG X, REN S, et al. Deep residual learning for image recognition[C]∥Proceedings of the IEEE Conference on Computer Vision and Pattern Recognition. Las Vegas: IEEE, 2016: 770-778. |

| 4 | HUANG G, LIU Z, VAN DER MAATEN L, et al. Densely connected convolutional networks[C]∥Proceedings of the IEEE Conference on Computer Vision and Pattern Recognition. Honolulu: IEEE, 2017: 4700-4708. |

| 5 | HU J, SHEN L, SUN G. Squeeze-and-excitation networks[C]∥Proceedings of the IEEE Conference on Computer Vision and Pattern Recognition. Salt Lake City: IEEE, 2018: 7132-7141. |

| 6 | DAI T, CAI J, ZHANG Y, et al. Second-order attention network for single image super-resolution[C]∥Proceedings of the IEEE/CVF Conference on Computer Vision and Pattern Recognition. Long Beach: IEEE, 2019: 11065-11074. |

| 7 | ZHAO H, KONG X, HE J, et al. Efficient image super-resolution using pixel attention[C]∥Proceedings of the European Conference on Computer Vision. Glasgow: Springer, 2020: 56-72. |

| 8 | MNIH V, HEESS N, GRAVES A. Recurrent models of visual attention[J]. Advances in Neural Information Processing Systems, 2014, 2(12): 2204-2212. |

| 9 | BA J, MNIH V, KAVUKCUOGLU K. Multiple object recognition with visual attention[EB/OL]. (2015-04-23)[2023-05-10]. |

| 10 | XU K, BA J, KIROS R, et al. Show, attend and tell: neural image caption generation with visual attention[C]∥Proceedings of the International Conference on Machine Learning. Lille: PMLR, 2015: 2048-2057. |

| 11 | GREGOR K, DANIHELKA I, GRAVES A, et al. DRAW: a recurrent neural network for image generation[C]∥Proceedings of the International Conference on Machine Learning. Lille: PMLR, 2015: 1462-1471. |

| 12 | WOO S, PARK J, LEE J Y, et al. CBAM: convolutional block attention module[C]∥Proceedings of the European Conference on Computer Vision. Munich: Springer, 2018: 3-19. |

| 13 | WANG X, GIRSHICK R, GUPTA A, et al. Non-local neural networks[C]∥Proceedings of the IEEE Conference on Computer Vision and Pattern Recognition. Salt Lake City: IEEE, 2018: 7794-7803. |

| 14 | WANG Q, WU B, ZHU P, et al. ECA-Net: efficient channel attention for deep convolutional neural networks[C]∥Proceedings of the IEEE/CVF Conference on Computer Vision and Pattern Recognition. Seattle: IEEE, 2020: 11534-11542. |

| 15 | PARK J, WOO S, LEE J Y, et al. BAM: bottleneck attention module[EB/OL]. (2018-07-18)[2023-05-10]. |

| 16 | VASWANI A, SHAZEER N, PARMAR N, et al. Attention is all you need[J]. Advances in Neural Information Processing Systems, 2017, 31(17): 6000-6010. |

| 17 | LIU Z, LIN Y, CAO Y, et al. Swin transformer: hierarchical vision transformer using shifted windows[C]∥Proceedings of the IEEE/CVF International Conference on Computer Vision. Montreal: IEEE, 2021: 10012-10022. |

| 18 | IOFFE S, SZEGEDY C. Batch normalization: accelerating deep network training by reducing internal covariate shift[C]∥Proceedings of the International Conference on Machine Learning. Lille: PMLR, 2015: 448-456. |

| 19 | LI X, SUN W, WU T. Attentive normalization[C]∥Proceedings of the European Conference on Computer Vision. Glasgow: Springer, 2020: 70-87. |

| 20 | YAO M, ZHAO G, ZHANG H, et al. Attention spiking neural networks[J]. IEEE Transactions on Pattern Analysis and Machine Intelligence, 2023, 45(8): 9393-9410. |

| 21 | YANG L, ZHANG R Y, LI L, et al. SimAM: a simple, parameter-free attention module for convolutional neural networks[C]∥Proceedings of the International Conference on Machine Learning. Graz: PMLR, 2021: 11863-11874. |

| 22 | WEBB B S, DHRUV N T, SOLOMON S G, et al. Early and late mechanisms of surround suppression in striate cortex of macaque[J]. Journal of Neuroscience, 2005, 25(50): 11666-11675. |

| 23 | TAN S, ZHANG L, SHU X, et al. A feature-wise attention module based on the difference with surrounding features for convolutional neural networks[J]. Frontiers of Computer Science, 2023, 17(6): 338-348. |

| 24 | HE K, ZHANG X, REN S, et al. Identity mappings in deep residual networks[C]∥Proceedings of the 14th European Conference on Computer Vision. Amsterdam: Springer, 2016: 630-645. |

| 25 | EVERINGHAM M, ESLAMI S M A, VAN GOOL L, et al. The pascal visual object classes challenge: a retrospective[J]. International Journal of Computer Vision, 2015, 111(1): 98-136. |

| 26 | BOCHKOVSKIY A, WANG C Y, LIAO H Y M. YOLOv4: optimal speed and accuracy of object detection[EB/OL]. (2020-04-23)[2023-05-10]. |

| 27 | HARIHARAN B, ARBELAEZ P, BOURDEV L, et al. Semantic contours from inverse detectors[C]∥Proceedings of the 2011 International Conference on Computer Vision. Barcelona: IEEE, 2011: 991-998. |

| 28 | LOSHCHILOV I, HUTTER F. SGDR: stochastic gradient descent with warm restarts[EB/OL]. (2017-03-03)[2023-05-10]. |

| 29 | WANG J, SUN K, CHENG T, et al. Deep high-resolution representation learning for visual recognition[J]. IEEE Transactions on Pattern Analysis and Machine Intelligence, 2021, 43(10): 3349-3364. |

| [1] | LIU Weirong, ZHANG Zhiqiang, ZHANG Ning, MENG Jiahao, ZHANG Min, LIU Jie. A TT-Tucker Decomposition-Based LC Convolutional Neural Network Compression Method Without Pre-Training [J]. Journal of South China University of Technology(Natural Science Edition), 2024, 52(7): 29-38. |

| [2] | MA Xiaoliang, AN Lingling, DENG Congjian, et al. Translation Optimization Technology of Automatic Speech Recognition Based on Industry-Specific Vocabulary [J]. Journal of South China University of Technology(Natural Science Edition), 2023, 51(8): 118-125. |

| [3] | GUO Enqiang, FU Xinsha. Dropped Object Detection Method Based on Feature Similarity Learning [J]. Journal of South China University of Technology(Natural Science Edition), 2023, 51(6): 30-41. |

| [4] | ZHU Zhengyu, LUO Chao, HE Qianhua, et al. Multi-View Lip Motion and Voice Consistency Judgment Based on Lip Reconstruction and Three-Dimensional Coupled CNN [J]. Journal of South China University of Technology(Natural Science Edition), 2023, 51(5): 70-77. |

| [5] | YE Feng, CHEN Biao, LAI Yizong. Contrastive Knowledge Distillation Method Based on Feature Space Embedding [J]. Journal of South China University of Technology(Natural Science Edition), 2023, 51(5): 13-23. |

| [6] | LUO Yutao, GAO Qiang. Traffic Sign Detection Based on Channel Attention and Feature Enhancement [J]. Journal of South China University of Technology(Natural Science Edition), 2023, 51(12): 64-72. |

| [7] | QIU Zhibin, LU Zuwen, WANG Haixiang, et al. Recognition of Bird Sounds Related to Power Grid Faults Based on Mel Spectrogram and Convolutional Neural Network [J]. Journal of South China University of Technology(Natural Science Edition), 2022, 50(2): 129-136. |

| [8] | YU Lubin, TIAN Lianfang, DU Qiliang. Object Tracking Algorithm Based on Multi-Stream Attention Siamese Network [J]. Journal of South China University of Technology(Natural Science Edition), 2022, 50(12): 30-40. |

| [9] | ZHANG Xiangzhu, ZHANG Lijia, SONG Yifan, et al. Obstacle Avoidance Algorithm for Unmanned Aerial Vehicle Vision Based on Deep Learning [J]. Journal of South China University of Technology(Natural Science Edition), 2022, 50(1): 101-108, 131. |

| [10] | HUANG Min, QI Haitao, JIANG Chunlin. Coupled Collaborative Filtering Model Based on Attention Mechanism [J]. Journal of South China University of Technology(Natural Science Edition), 2021, 49(7): 59-65. |

| [11] | ZHANG Yan, WU Luotian, WANG Nian, et al. 2D Footprint Classification Based on Multiple-Module Relation Network [J]. Journal of South China University of Technology (Natural Science Edition), 2021, 49(6): 66-76. |

| [12] | LIU Qi, YU Bin. Pavement Crack Recognition Algorithm Based on Transposed CNN [J]. Journal of South China University of Technology(Natural Science Edition), 2021, 49(12): 124-132. |

| [13] | LI Bo, RAO Haobo. Salient Object Detection Based on Feature Enhancement in Complex Scene [J]. Journal of South China University of Technology (Natural Science Edition), 2021, 49(11): 135-144. |

| [14] | GENG Qinghua, LIU Weiming. A Fast and Stable Algebraic Solution to Perspective-Three-Point Problem [J]. Journal of South China University of Technology(Natural Science Edition), 2021, 49(1): 58-64,73. |

| [15] | DU Qiliang, HUANG Liguang, TIAN Lianfang, et al. Recognition of Passengers' Abnormal Behavior on Escalator Based on Video Monitoring [J]. Journal of South China University of Technology (Natural Science Edition), 2020, 48(8): 10-21. |
