Journal of South China University of Technology (Natural Science Edition) ›› 2022, Vol. 50 ›› Issue (12): 41-48. doi: 10.12141/j.issn.1000-565X.220095

Special Issue: Computer Science and Technology, 2022

• Computer Science & Technology •

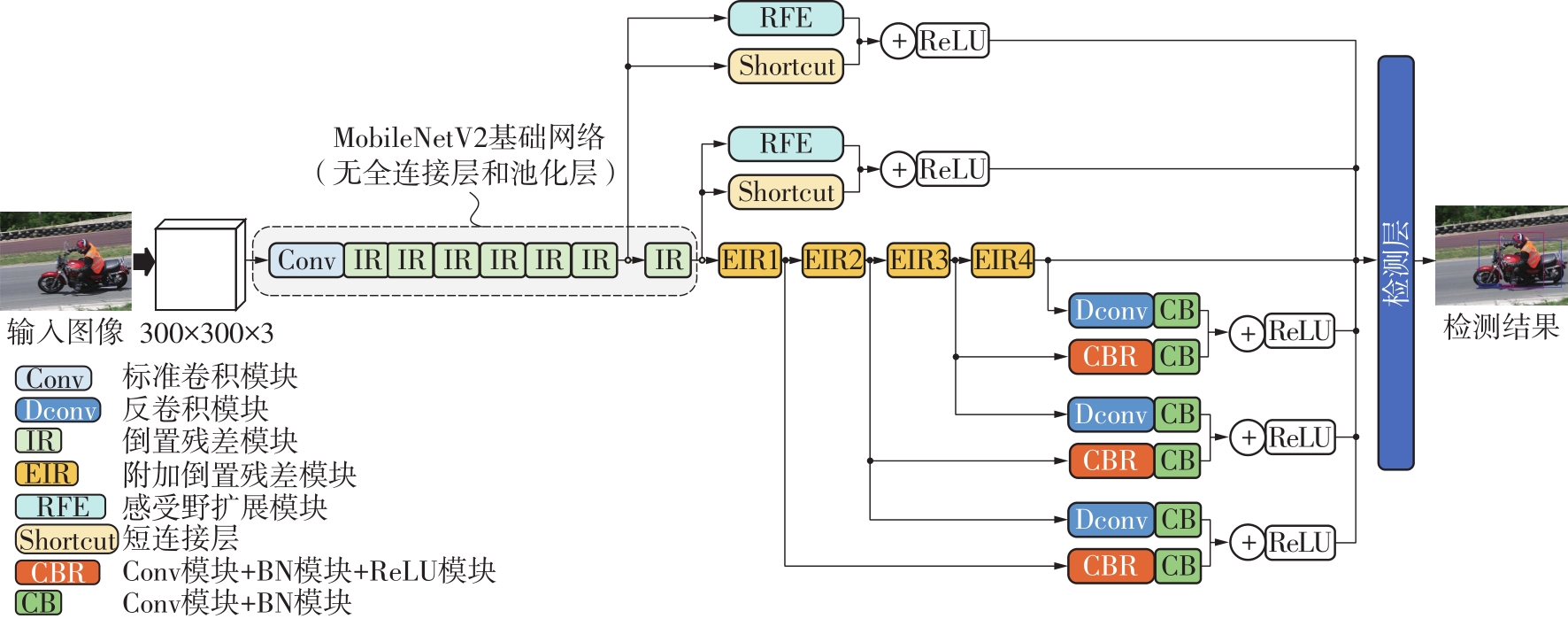

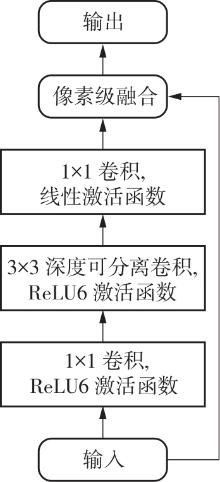

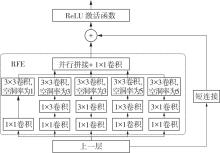

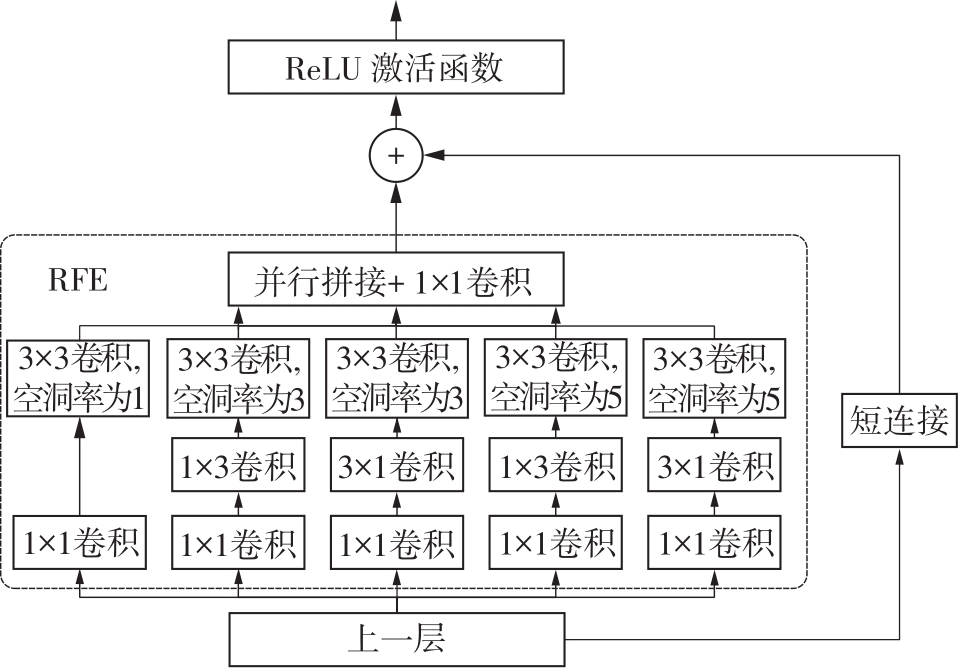

Lightweight Object Detection Combined with Multi-Scale Dilated-Convolution and Multi-Scale Deconvolution

YI Qingming1,2, LÜ Renyi1, SHI Min1, LUO Aiwen1

1. College of Information Science and Technology, Jinan University, Guangzhou 510632, Guangdong, China

2. Techtotop Microelectronics Technology Co., Ltd., Guangzhou 510663, Guangdong, China

Received: 2022-03-06 Online: 2022-12-25 Published: 2022-04-08

Contact: LUO Aiwen (born 1986), female, Ph.D., lecturer, mainly engaged in research on machine vision and intelligent IC design. E-mail: luoaiwen@jnu.edu.cn

About author: YI Qingming (born 1965), female, Ph.D., professor, mainly engaged in research on communication signal processing and SoC design, and artificial intelligence SoC design. E-mail: tyqm@jnu.edu.cn

Supported by: the National Natural Science Foundation of China (62002134); the Basic and Applied Basic Research Foundation of Guangdong Province (2020A1515110645); the Project of Key Laboratory of New Semiconductors and Devices of Guangdong Province (2021KSY001)

CLC Number:

Cite this article

YI Qingming, LÜ Renyi, SHI Min, et al. Lightweight Object Detection Combined with Multi-Scale Dilated-Convolution and Multi-Scale Deconvolution [J]. Journal of South China University of Technology (Natural Science Edition), 2022, 50(12): 41-48.


Table 4

Performance comparison with different networks

| Dataset | Network model | mAP/% | Detection speed/(f·s⁻¹) | Parameters/10⁶ |
|---|---|---|---|---|
| KITTI | SSD | 55.9 | 49 | 26.79 |
| KITTI | SqueezeNet-SSD | 48.1 | 104 | 5.04 |
| KITTI | RFB Net | 61.3 | 88 | 34.50 |
| KITTI | Tiny-YOLOv2 | 41.9 | 29 | 15.10 |
| KITTI | LVP-DN | 56.6 | 63 | 6.56 |
| KITTI | MDDNet | 58.7 | 55 | 7.21 |
| Pascal VOC | SSD | 74.3 | 46 | 26.79 |
| Pascal VOC | SqueezeNet-SSD | 64.3 | 100 | 5.04 |
| Pascal VOC | RFB Net | 80.5 | 83 | 34.50 |
| Pascal VOC | Tiny-YOLOv2 | 57.1 | 25 | 15.10 |
| Pascal VOC | LVP-DN | 73.2 | 60 | 6.56 |
| Pascal VOC | MDDNet | 76.0 | 52 | 7.21 |
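The trade-off reported in Table 4 can be read off with a little arithmetic. The following is a minimal sketch that copies the table values and compares one model against a baseline on the same dataset. The names `TABLE_4` and `compare`, and the choice of SSD as the default baseline, are illustrative assumptions and do not come from the article.

```python
# Rows copied from Table 4:
# (dataset, model, mAP in %, detection speed in f/s, parameters in millions)
TABLE_4 = [
    ("KITTI", "SSD", 55.9, 49, 26.79),
    ("KITTI", "SqueezeNet-SSD", 48.1, 104, 5.04),
    ("KITTI", "RFB Net", 61.3, 88, 34.50),
    ("KITTI", "Tiny-YOLOv2", 41.9, 29, 15.10),
    ("KITTI", "LVP-DN", 56.6, 63, 6.56),
    ("KITTI", "MDDNet", 58.7, 55, 7.21),
    ("Pascal VOC", "SSD", 74.3, 46, 26.79),
    ("Pascal VOC", "SqueezeNet-SSD", 64.3, 100, 5.04),
    ("Pascal VOC", "RFB Net", 80.5, 83, 34.50),
    ("Pascal VOC", "Tiny-YOLOv2", 57.1, 25, 15.10),
    ("Pascal VOC", "LVP-DN", 73.2, 60, 6.56),
    ("Pascal VOC", "MDDNet", 76.0, 52, 7.21),
]


def compare(dataset, model, baseline="SSD"):
    """Compare `model` with `baseline` on one dataset (hypothetical helper)."""
    rows = {m: (m_ap, fps, params)
            for d, m, m_ap, fps, params in TABLE_4 if d == dataset}
    m_ap, m_fps, m_params = rows[model]
    b_ap, b_fps, b_params = rows[baseline]
    return {
        "map_gain": round(m_ap - b_ap, 1),               # percentage points
        "fps_gain": m_fps - b_fps,                       # frames per second
        "param_ratio": round(m_params / b_params, 3),    # model / baseline
    }


for dataset in ("KITTI", "Pascal VOC"):
    print(dataset, compare(dataset, "MDDNet"))
```

Under these assumptions, MDDNet uses roughly 27% of SSD's parameters while gaining 2.8 mAP points on KITTI and 1.7 on Pascal VOC, and it runs 6 f/s faster on both datasets.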


