Journal of South China University of Technology (Natural Science)

Image Inpainting via Residual Attention Fusion and Gated Information Distillation

Received date: 2022-01-13

Published online: 2022-08-05

Supported by the National Natural Science Foundation of China (62166048) and the Applied Basic Research Project of Yunnan Province (2018FB102)

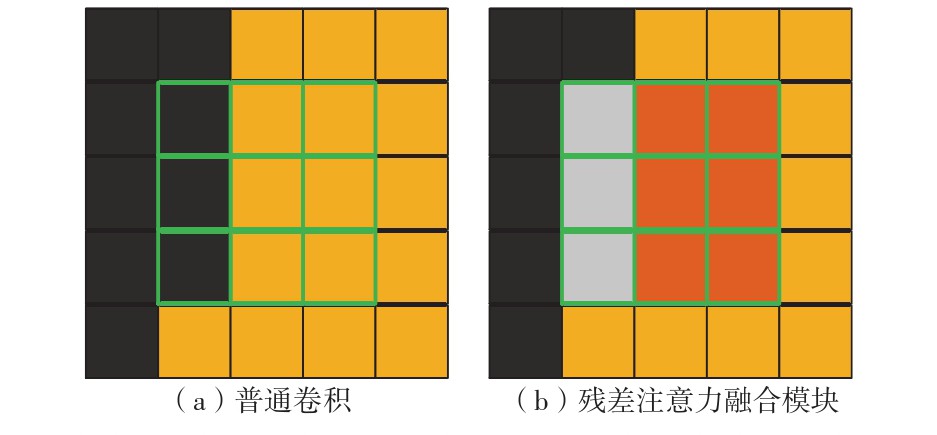

Image inpainting is an important and valuable task in computer vision, and in recent years deep-learning-based inpainting models have been widely used in this field. However, existing deep-learning inpainting models make insufficient use of the valid information in the damaged image and are disturbed by the mask information it contains, so part of the structure is lost and some details are blurred in the repaired image. This paper therefore proposes an image inpainting model based on residual attention fusion and gated information distillation. The model consists of a generator and a discriminator. The generator uses a U-Net backbone made up of an encoder and a decoder, and the discriminator is a Markov discriminator with six convolutional layers. Residual attention fusion blocks are used in both the encoder and the decoder to make better use of the valid information in the damaged image and to reduce interference from the mask information. In addition, a gated information distillation block is embedded in the skip connection between the encoder and the decoder to further extract low-level features from the damaged image. Experimental results on public face and street-view datasets show that the proposed model restores semantic structure and texture details better, and that it outperforms five comparison models in terms of structural similarity (SSIM), peak signal-to-noise ratio (PSNR), mean absolute error (MAE), mean squared error (MSE), and Fréchet distance, demonstrating that its inpainting quality is superior to that of the compared models.
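Three of the evaluation metrics named above (MSE, MAE, and PSNR) are simple pixel-wise comparisons between the ground-truth image and the inpainted result. The following minimal Python sketch shows how they are conventionally computed. It assumes 8-bit grayscale images given as equally sized nested lists with a peak value of 255; the helper names are hypothetical, and this is not the authors' evaluation code. SSIM and the Fréchet distance need considerably more machinery and are omitted.

```python
import math
from typing import Iterator, Sequence, Tuple

# Assumption: an image is a 2-D list of pixel values in [0, 255] (8-bit grayscale).
Image = Sequence[Sequence[float]]


def _pixel_pairs(reference: Image, restored: Image) -> Iterator[Tuple[float, float]]:
    """Yield (reference, restored) pixel pairs after checking the shapes match."""
    if len(reference) != len(restored):
        raise ValueError("images must have the same height")
    for row_ref, row_out in zip(reference, restored):
        if len(row_ref) != len(row_out):
            raise ValueError("images must have the same width")
        yield from zip(row_ref, row_out)


def mse(reference: Image, restored: Image) -> float:
    """Mean squared error over all pixels (lower is better)."""
    pairs = list(_pixel_pairs(reference, restored))
    if not pairs:
        raise ValueError("images must not be empty")
    return sum((a - b) ** 2 for a, b in pairs) / len(pairs)


def mae(reference: Image, restored: Image) -> float:
    """Mean absolute error over all pixels (lower is better)."""
    pairs = list(_pixel_pairs(reference, restored))
    if not pairs:
        raise ValueError("images must not be empty")
    return sum(abs(a - b) for a, b in pairs) / len(pairs)


def psnr(reference: Image, restored: Image, peak: float = 255.0) -> float:
    """Peak signal-to-noise ratio in dB, 10 * log10(peak^2 / MSE) (higher is better)."""
    error = mse(reference, restored)
    if error == 0:
        return math.inf  # identical images
    return 10.0 * math.log10(peak ** 2 / error)


if __name__ == "__main__":
    ground_truth = [[100, 100], [100, 100]]
    inpainted = [[110, 90], [100, 100]]
    print(mse(ground_truth, inpainted))  # 50.0
    print(mae(ground_truth, inpainted))  # 5.0
    print(round(psnr(ground_truth, inpainted), 2))  # 31.14
```

Many evaluations normalize pixels to [0, 1] instead; in that case `peak` becomes 1.0, MSE and MAE are rescaled accordingly, and PSNR is unchanged because the peak and the error scale together.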

YU Ying, HE Penghao, XU Chaoyue. Image Inpainting via Residual Attention Fusion and Gated Information Distillation[J]. Journal of South China University of Technology (Natural Science), 2022, 50(12): 49-59. DOI: 10.12141/j.issn.1000-565X.220025

