Journal of South China University of Technology (Natural Science)

Information Retrieval Re-Ranking Method Based on Bidirectional Text Expansion

Received date: 2024-10-09

Published online: 2025-01-13

Supported by the National Natural Science Foundation of China (62472192)

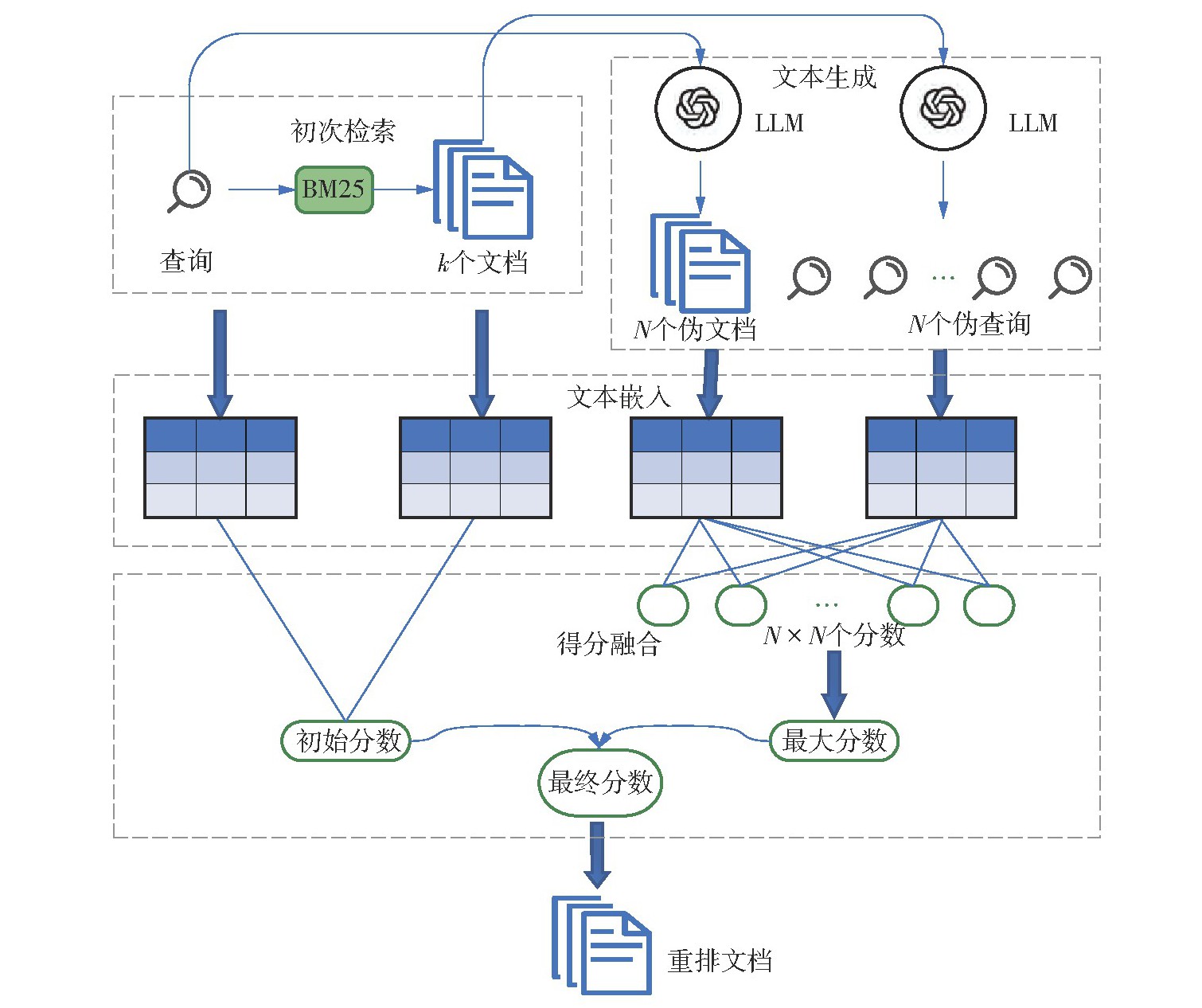

With the rapid development of large language models (LLMs), both text matching and text expansion in information retrieval have made remarkable progress. Query expansion and document expansion, two important methods for enriching text representations, are widely used in modern information retrieval systems, and mainstream text expansion methods now rely primarily on LLMs. However, LLM-generated text differs noticeably from human-written text in linguistic diversity and style. These differences can reduce the accuracy of query-document relevance estimation and, in turn, degrade the performance of the whole retrieval system. To address this issue, this paper proposes an information retrieval re-ranking method based on bidirectional text expansion (BTE-IRRM). First, zero-shot prompting is used to have an LLM generate pseudo-queries for documents and pseudo-documents for queries. Then, the semantic similarity between these pseudo-queries and pseudo-documents is computed. Finally, the similarity score of the original query-document pair and the semantic similarity score of the pseudo-query-pseudo-document pair are combined by weighted fusion to produce the final document ranking. To validate the method, experiments were conducted on two public datasets, DL19 and DL20. The results show that BTE-IRRM achieves significant improvements over existing baseline methods on multiple evaluation metrics. The proposed bidirectional text expansion method can therefore further strengthen relevance matching between queries and documents and improve the performance of the overall information retrieval system.
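The weighted-fusion step described in the abstract can be illustrated with a minimal sketch. The abstract does not give the fusion formula, the similarity function, or the weight, so the code below assumes a linear interpolation with a hypothetical weight `alpha`, cosine similarity, and embeddings computed beforehand. The names `cosine`, `fused_score`, and `rerank` are illustrative and are not taken from the paper.

```python
import math
from typing import List, Sequence, Tuple


def cosine(u: Sequence[float], v: Sequence[float]) -> float:
    """Cosine similarity of two equal-length vectors (0.0 if either is all zeros)."""
    dot = sum(a * b for a, b in zip(u, v))
    norm = math.sqrt(sum(a * a for a in u)) * math.sqrt(sum(b * b for b in v))
    return dot / norm if norm else 0.0


def fused_score(orig_sim: float, pseudo_sim: float, alpha: float = 0.5) -> float:
    """Assumed fusion rule: alpha * original score + (1 - alpha) * pseudo score."""
    if not 0.0 <= alpha <= 1.0:
        raise ValueError("alpha must lie in [0, 1]")
    return alpha * orig_sim + (1.0 - alpha) * pseudo_sim


def rerank(
    query_vec: Sequence[float],
    pseudo_doc_vec: Sequence[float],
    candidates: List[Tuple[str, Sequence[float], Sequence[float]]],
    alpha: float = 0.5,
) -> List[Tuple[str, float]]:
    """Re-rank candidates given as (doc_id, doc_vec, pseudo_query_vec).

    query_vec        embedding of the original query
    pseudo_doc_vec   embedding of the pseudo-document generated for the query
    doc_vec          embedding of the original document
    pseudo_query_vec embedding of the pseudo-query generated for the document
    """
    scored = []
    for doc_id, doc_vec, pseudo_query_vec in candidates:
        s_orig = cosine(query_vec, doc_vec)                   # original pair
        s_pseudo = cosine(pseudo_query_vec, pseudo_doc_vec)   # generated pair
        scored.append((doc_id, fused_score(s_orig, s_pseudo, alpha)))
    # Highest fused score first.
    return sorted(scored, key=lambda pair: pair[1], reverse=True)


# Toy two-dimensional vectors, invented purely for illustration.
toy_query = [1.0, 0.0]
toy_pseudo_doc = [0.0, 1.0]
toy_candidates = [
    ("d1", [1.0, 0.0], [1.0, 0.0]),
    ("d2", [0.6, 0.8], [0.0, 1.0]),
]
print(rerank(toy_query, toy_pseudo_doc, toy_candidates, alpha=0.5))
```

In this toy input, `d1` matches the original query best, while `d2` matches better on the generated pair. An equal weighting therefore ranks `d2` first, whereas `alpha=1.0` falls back to the original query-document ordering. In practice the weight would presumably be tuned on held-out data, and the two scores may need normalization before fusion; neither detail is stated in the abstract.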

TU Xinhui, GUO Cong, ZONG Yuhang. Information Retrieval Re-Ranking Method Based on Bidirectional Text Expansion[J]. Journal of South China University of Technology (Natural Science), 2025, 53(9): 59-67. DOI: 10.12141/j.issn.1000-565X.240499

