LiDAR-Binocular Camera Calibration by Minimizing LiDAR Isotropic Error

Received date: 2022-04-06

Online published: 2022-07-15

Supported by the National Natural Science Foundation of China (51875204) and the Natural Science Foundation of Guangdong Province (2020A1515011543)

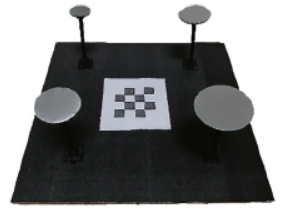

Multi-modal data fusion of LiDAR (Laser Imaging, Detection, and Ranging) and a binocular camera is important in 3D reconstruction research. Each sensor has its own advantages and disadvantages, and data fusion lets the two complement each other to obtain better reconstruction results. To fuse the data, the measurements of the two sensors must first be unified into the same coordinate system, which makes the calibration of the extrinsic parameters between the LiDAR and the camera very important to 3D reconstruction. Because the LiDAR point cloud is sparse and subject to positioning error, it is challenging to extract feature points accurately, and therefore to construct accurate point correspondences, when calibrating the extrinsic parameters between a LiDAR and a stereo camera. In addition, most calibration methods ignore the fact that LiDAR measures in a spherical coordinate system and directly use the Cartesian coordinate measurements for calibration, which introduces anisotropic coordinate error and reduces the calibration accuracy. This paper proposes a calibration method that minimizes the isotropic spherical coordinate error. First, a novel calibration object using centroid feature points is proposed to improve the extraction accuracy of feature points. Second, the anisotropic LiDAR Cartesian coordinate error is converted into isotropic spherical coordinate error, and the extrinsic parameters are solved by directly minimizing the spherical coordinate error. Experiments show that the proposed method has advantages over the anisotropic weighting method. The method ensures that the solution is globally optimal, and the number of calibration samples required is greatly reduced at the cost of some accuracy. With the optimal calibration error of 2.75 mm, the proposed method can reduce the amount of calibration data by about 54.5% by sacrificing 3.6% accuracy.
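The idea of measuring calibration error in spherical rather than Cartesian coordinates can be illustrated with a minimal Python sketch. It is not the paper's implementation: the angle convention (range, azimuth, elevation), the direction of the rigid transform (camera frame into LiDAR frame), the noise scales `sigma_r` and `sigma_ang`, and all function names are assumptions made for illustration only.

```python
import math


def cartesian_to_spherical(p):
    """Convert (x, y, z) to (range, azimuth, elevation); convention is assumed."""
    x, y, z = p
    r = math.sqrt(x * x + y * y + z * z)
    azimuth = math.atan2(y, x)
    elevation = math.atan2(z, math.hypot(x, y))
    return r, azimuth, elevation


def apply_rigid(R, t, p):
    """Apply a 3x3 rotation R (nested lists) and a translation t to point p."""
    return tuple(sum(R[i][j] * p[j] for j in range(3)) + t[i] for i in range(3))


def wrap_angle(a):
    """Wrap an angle difference into (-pi, pi]."""
    return math.atan2(math.sin(a), math.cos(a))


def spherical_residual(R, t, cam_point, lidar_point, sigma_r=1.0, sigma_ang=1.0):
    """Residual between a camera point mapped into the LiDAR frame and the
    LiDAR measurement, expressed in spherical coordinates and divided by
    assumed per-axis noise scales so the components are comparable."""
    pred = cartesian_to_spherical(apply_rigid(R, t, cam_point))
    meas = cartesian_to_spherical(lidar_point)
    return (
        (pred[0] - meas[0]) / sigma_r,
        wrap_angle(pred[1] - meas[1]) / sigma_ang,
        wrap_angle(pred[2] - meas[2]) / sigma_ang,
    )


def spherical_cost(R, t, cam_points, lidar_points, sigma_r=1.0, sigma_ang=1.0):
    """Sum of squared spherical residuals over all point correspondences."""
    total = 0.0
    for c, l in zip(cam_points, lidar_points):
        res = spherical_residual(R, t, c, l, sigma_r, sigma_ang)
        total += sum(e * e for e in res)
    return total


# Hypothetical usage: with the true extrinsics the cost vanishes.
IDENTITY = [[1.0, 0.0, 0.0], [0.0, 1.0, 0.0], [0.0, 0.0, 1.0]]
points = [(1.0, 2.0, 0.5), (3.0, -1.0, 0.2)]
print(spherical_cost(IDENTITY, (0.0, 0.0, 0.0), points, points))
```

In a full calibration, a cost of this kind would be passed to a nonlinear optimizer over the rotation and translation. The sketch only shows how the residual is formed, not how the paper solves for the extrinsic parameters or obtains its optimality guarantee.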

Key words: LiDAR; binocular camera; anisotropic; calibration

Zhong CHEN, Zichen LIU, Xianmin ZHANG. LiDAR-Binocular Camera Calibration by Minimizing LiDAR Isotropic Error[J]. Journal of South China University of Technology (Natural Science), 2023, 51(2): 1-9. DOI: 10.12141/j.issn.1000-565X.220178

