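Received date: 2023-05-18

Online published: 2023-09-28

Supported by

the Natural Science Foundation of Guangdong Province (2022A1515010806); the Science and Technology Program of Guangzhou (2023B01J0046)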

Multi-Object Recognition and 6-DoF Pose Estimation Based on Synthetic Datasets


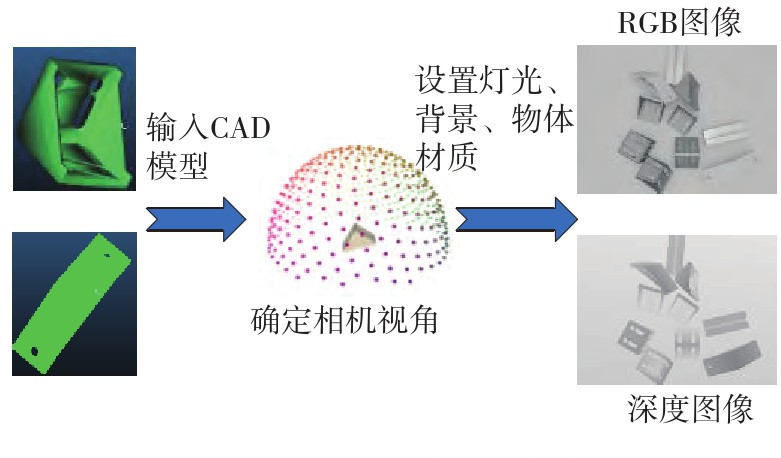

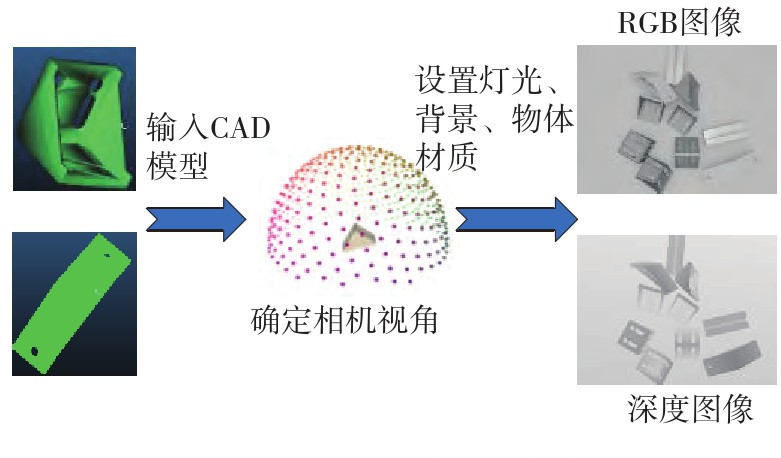


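HU Guanghua, OU Meitong, LI Zhendong. Multi-object recognition and 6-DoF pose estimation based on synthetic datasets[J]. Journal of South China University of Technology (Natural Science Edition), 2024, 52(4): 42-50. DOI: 10.12141/j.issn.1000-565X.230327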

Multi-object recognition and six-degree-of-freedom (6-DoF) pose estimation are key to robotic automatic sorting of randomly stacked materials. In recent years, methods based on deep neural networks have received much attention in object recognition and pose estimation. However, such methods rely on large numbers of training samples, and collecting and labeling those samples is time-consuming and laborious, which limits their practicality. In addition, when imaging conditions are poor and targets occlude one another, existing pose estimation methods cannot guarantee reliable results, which leads to grasping failures. To address these problems, this paper proposes a method for object recognition, segmentation and pose estimation based on synthetic data samples. First, multi-view RGB-D synthetic images of virtual scenes are generated with 3D graphics programming tools from the 3D geometric models of the target objects; style transfer is then applied to the generated RGB images and noise enhancement to the depth images, making the synthetic data more realistic and better suited to detection in real scenes. Next, the YOLOv7-mask instance segmentation model is trained on the synthetic dataset and tested on real data, and the results verify the effectiveness of the approach. Then, building on the segmentation results and the ES6D pose estimation model, an online pose evaluation method is proposed to automatically filter out severely distorted estimates. Finally, a pose estimation correction strategy based on active vision guides the robot arm to a new viewpoint for re-detection, which addresses pose estimation deviations caused by occlusion. Experiments on a self-built 6-DoF industrial robot vision sorting system show that the proposed method adapts well to the recognition and 6-DoF pose estimation of workpieces in complex environments.
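The multi-view rendering step needs a set of camera viewpoints around the virtual scene. The abstract does not say how these viewpoints are chosen, so the following is only a minimal sketch under one plausible assumption: cameras are spread near-uniformly over the upper hemisphere above the scene with a Fibonacci lattice, each looking at the scene origin. The function name `fibonacci_hemisphere` and the `radius` parameter are hypothetical and are not taken from the paper.

```python
import math


def fibonacci_hemisphere(n_views, radius=1.0):
    """Return n_views (position, view_direction) pairs on the upper hemisphere.

    Assumption for illustration only: the scene is centred at the origin
    and every camera looks straight at it.
    """
    golden_angle = math.pi * (3.0 - math.sqrt(5.0))  # about 2.39996 rad
    views = []
    for i in range(n_views):
        # Heights spaced evenly in (0, 1): equal-height bands of a sphere
        # have equal area, so the points are spread near-uniformly.
        z = (i + 0.5) / n_views
        r = math.sqrt(1.0 - z * z)       # ring radius at height z
        theta = golden_angle * i         # rotate by the golden angle each step
        position = (radius * r * math.cos(theta),
                    radius * r * math.sin(theta),
                    radius * z)
        # Unit vector pointing from the camera back to the origin.
        direction = tuple(-c / radius for c in position)
        views.append((position, direction))
    return views


if __name__ == "__main__":
    for position, direction in fibonacci_hemisphere(5, radius=0.6):
        print(position, direction)
```

Each returned position could then be handed to whatever 3D rendering tool produces the RGB-D images. The same sampling could in principle also supply candidate viewpoints for the active-vision re-detection step, although the abstract does not state that the authors do this.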

