Image Inpainting Algorithm Based on Hybrid Encoding and Mask Space Modulation

Received date: 2024-04-03

Online published: 2024-10-08

Supported by

the Key-Area Research and Development Program of Guangdong Province (2022B0101070001); the Fundamental Research Funds for the Central Universities, South China University of Technology (Z2kjD2220210)

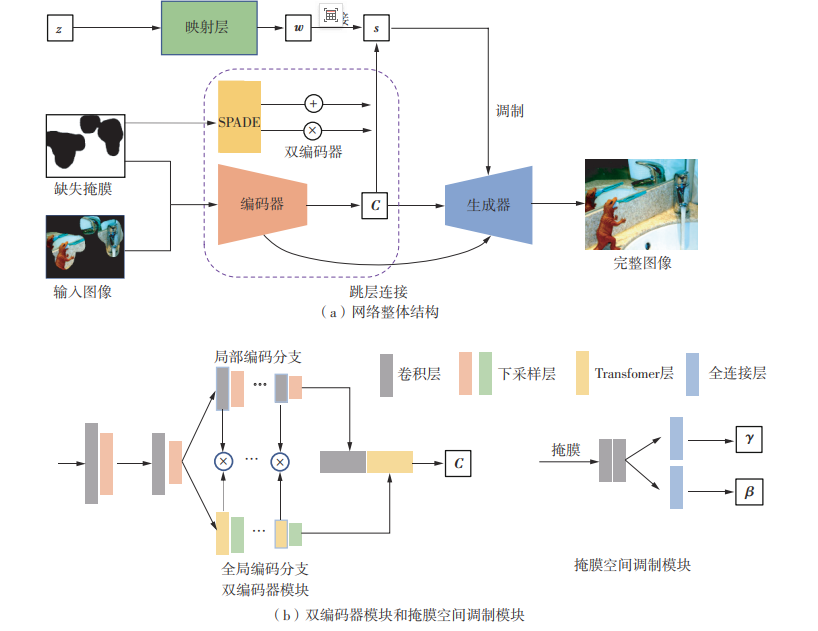

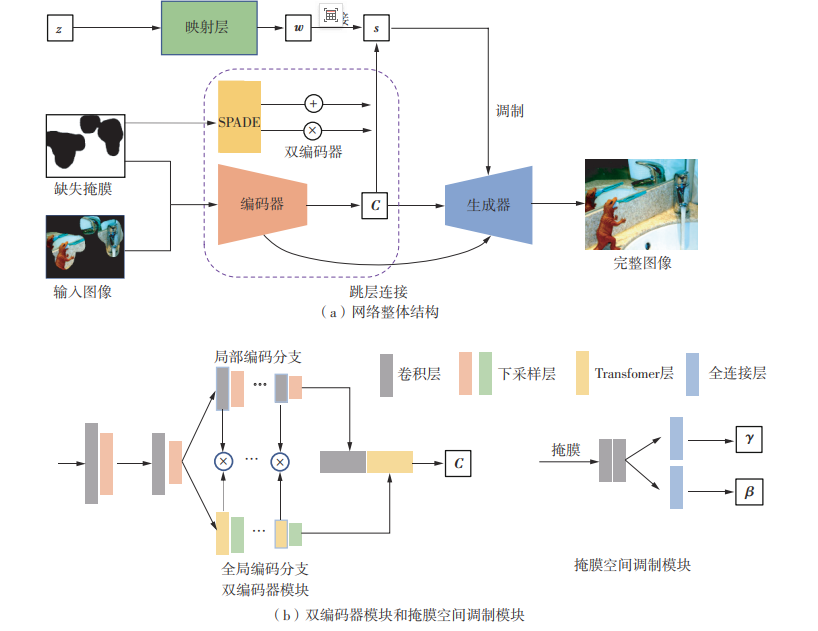

XIAN Jin, XU Xiaoru, XIAN Yunting, XIAN Chuhua. Image inpainting algorithm based on hybrid encoding and mask space modulation[J]. Journal of South China University of Technology (Natural Science Edition), 2025, 53(3): 31-39. DOI: 10.12141/j.issn.1000-565X.240155

Image inpainting fills the missing regions of an image with plausible content and is an important problem in computer vision and image processing. Inpainting algorithms have made substantial progress, but when a scene is complex and large areas are missing, existing algorithms still struggle to generate high-quality complete images because they lack effective network structures for capturing long-range dependencies and high-level semantic information. To address inpainting of images with large missing regions, this paper proposes an image inpainting algorithm based on hybrid encoding and mask spatial modulation. The aim is to enlarge the limited receptive field of inpainting networks, to obtain global information from the visible regions of the image effectively, and to make full use of the valid information those regions contain. First, a hybrid encoding network extracts local and global features from the visible regions of the image. Then, a mask spatial modulation module dynamically adjusts the diversity of the generated content according to the size of the missing area. Finally, a StyleGAN2-based method generates the complete image. Experimental results show that the proposed algorithm handles images with large missing areas effectively, generates diverse, high-quality images, and can be applied to data augmentation for visual saliency models.
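The abstract states that the diversity of the generated content is adjusted according to the size of the missing area, but it does not give the rule. The sketch below is only a toy illustration of that idea, not the paper's module: it assumes a binary mask (1 = missing, 0 = visible) and a linear blend whose noise weight equals the missing-area ratio. The names `missing_ratio` and `modulated_style`, and the blending rule itself, are hypothetical.

```python
import random


def missing_ratio(mask):
    """Fraction of pixels marked missing (1 = missing, 0 = visible)."""
    total = sum(len(row) for row in mask)
    missing = sum(sum(row) for row in mask)
    return missing / total


def modulated_style(encoded, noise, mask):
    """Blend an encoder feature vector with a noise vector.

    Assumed rule (not from the paper): the noise weight equals the
    missing-area ratio, so small holes follow the visible content closely
    and large holes leave more room for variation.
    """
    r = missing_ratio(mask)
    return [(1.0 - r) * e + r * n for e, n in zip(encoded, noise)]


# Toy 4x4 mask with the right half missing, so the ratio is 0.5.
mask = [[0, 0, 1, 1] for _ in range(4)]
encoded = [1.0, 2.0, 3.0]  # stand-in for features from the visible region
rng = random.Random(0)
noise = [rng.gauss(0.0, 1.0) for _ in encoded]  # stand-in for a latent sample
style = modulated_style(encoded, noise, mask)
```

With a fully visible mask the blend returns the encoder vector unchanged, and with a fully missing mask it returns the noise vector, which is the limiting behaviour one would expect from any area-dependent diversity control.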

