Foggy Road Environment Perception Algorithm Based on an Improved CycleGAN and YOLOv8

Received date: 2024-05-09

Online published: 2024-08-23

Supported by

the National Natural Science Foundation of China (62173107); the National Automobile Accident In-Depth Investigation System Funding Project (NAIS-ZL-ZHGL-2020018); the Key R&D Program of Heilongjiang Province (JD22A014)

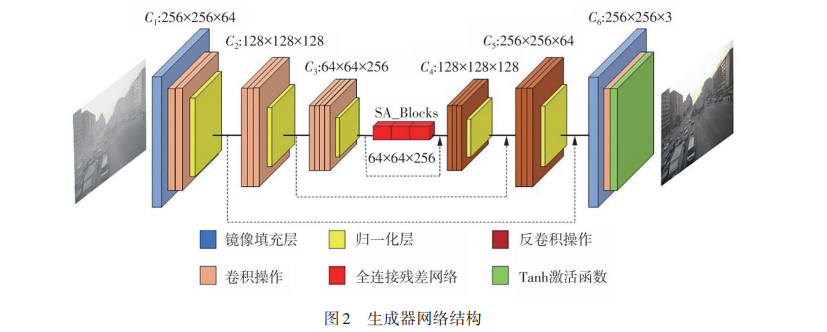

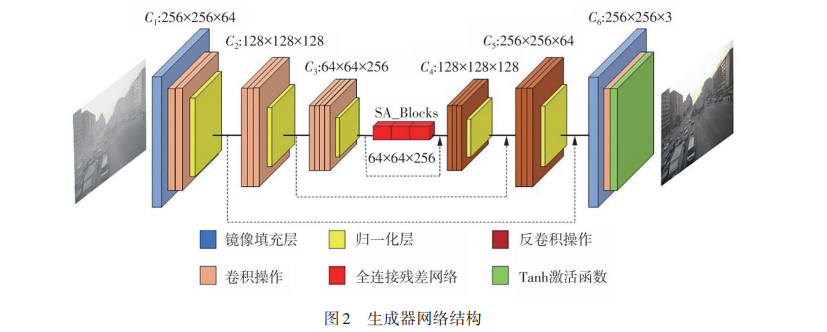

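YUE Yongheng, LEI Wenpeng. Foggy Road Environment Perception Algorithm Based on an Improved CycleGAN and YOLOv8[J]. Journal of South China University of Technology (Natural Science Edition), 2025, 53(2): 48-57. DOI: 10.12141/j.issn.1000-565X.240225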

To address the reduced accuracy of road environment perception by intelligent vehicles under extreme haze conditions, this paper proposes a joint foggy-environment perception algorithm based on an improved CycleGAN and YOLOv8. First, CycleGAN serves as the framework for image dehazing preprocessing: a self-attention mechanism is incorporated into the generator network to strengthen its feature extraction capability, and a self-regularized color loss function is introduced to reduce color discrepancies with real images. Second, in the object detection stage, the lightweight GhostConv network replaces the original backbone network to reduce computation; the GAM attention mechanism is added to the neck network to improve the network's ability to exchange global information; and the WIoU loss function is used to reduce the harmful gradients produced by low-quality samples, which speeds up model convergence. The algorithm is validated on the RESIDE and BDD100k datasets. The results show that the structural similarity between the dehazed and original images is 85%. Compared with the original CycleGAN algorithm and the AODNet algorithm, the proposed approach improves the peak signal-to-noise ratio (PSNR) by 2.24 dB and 2.5 dB, respectively, and the structural similarity index (SSIM) by 15.4 and 36.3 percentage points, respectively. The improved YOLOv8 algorithm raises precision, recall, and mean average precision (mAP) by 2.5, 1.8, and 1.1 percentage points, respectively, over the original algorithm. These results confirm that the proposed algorithm outperforms traditional algorithms in recall and detection accuracy and has practical value.
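The dehazing results above are reported as PSNR gains in decibels. As a reading aid, the following is a minimal sketch of how PSNR is conventionally computed for 8-bit grayscale images. It is not the authors' evaluation code: the function names `mse` and `psnr`, the nested-list image representation, and the toy pixel values are all assumptions made for illustration.

```python
import math


def mse(reference, restored):
    """Mean squared error between two equally sized grayscale images.

    Images are assumed (for this sketch) to be non-empty nested lists of
    pixel intensities, one inner list per row.
    """
    if not reference or len(reference) != len(restored):
        raise ValueError("images must be non-empty and have the same shape")
    total = 0.0
    count = 0
    for ref_row, res_row in zip(reference, restored):
        if len(ref_row) != len(res_row):
            raise ValueError("images must have the same shape")
        for ref_px, res_px in zip(ref_row, res_row):
            total += (ref_px - res_px) ** 2
            count += 1
    if count == 0:
        raise ValueError("images must contain at least one pixel")
    return total / count


def psnr(reference, restored, max_value=255.0):
    """Peak signal-to-noise ratio in dB: 10 * log10(max_value^2 / MSE)."""
    err = mse(reference, restored)
    if err == 0:
        return math.inf  # identical images: PSNR is unbounded
    return 10.0 * math.log10(max_value ** 2 / err)


# Toy data (hypothetical values, not taken from RESIDE or BDD100k).
clear = [[100, 150], [200, 250]]
dehazed_a = [[110, 160], [210, 260]]  # every pixel off by 10
dehazed_b = [[105, 155], [205, 255]]  # every pixel off by 5

gain_db = psnr(clear, dehazed_b) - psnr(clear, dehazed_a)
print(f"PSNR gain of b over a: {gain_db:.2f} dB")
```

Because PSNR is logarithmic in the mean squared error, halving the per-pixel error in this toy case quarters the MSE and raises PSNR by 10·log10(4), roughly 6 dB. Read the same way, a gain of about 2 to 2.5 dB corresponds to a reduction in mean squared error of roughly 40%.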
