Visual SLAM Algorithm Based on Memory Parking Scene

Received date: 2023-04-22

Online published: 2023-10-24

Supported by: the National Natural Science Foundation of China (51975219)

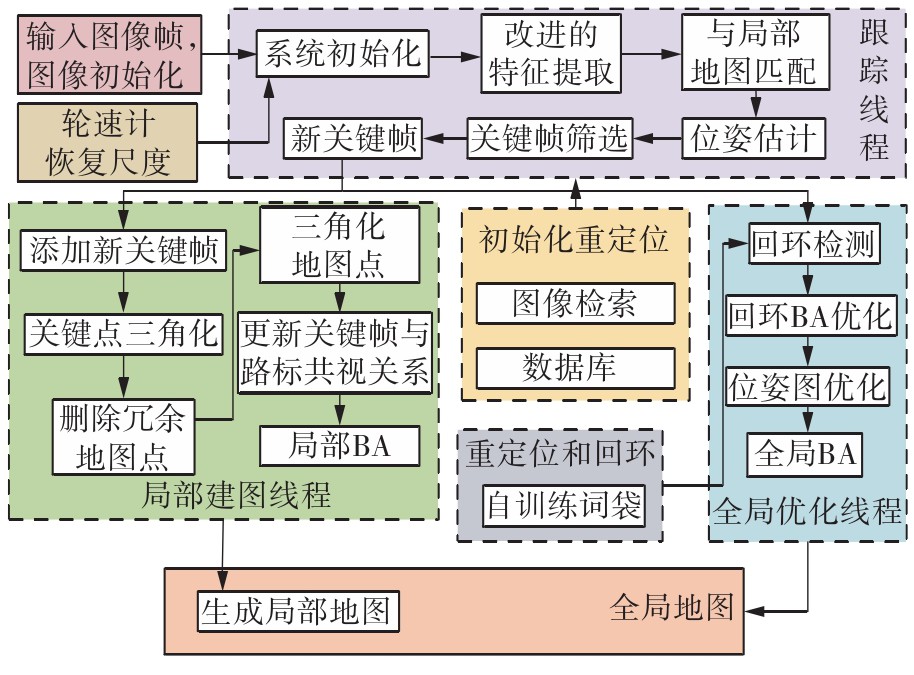

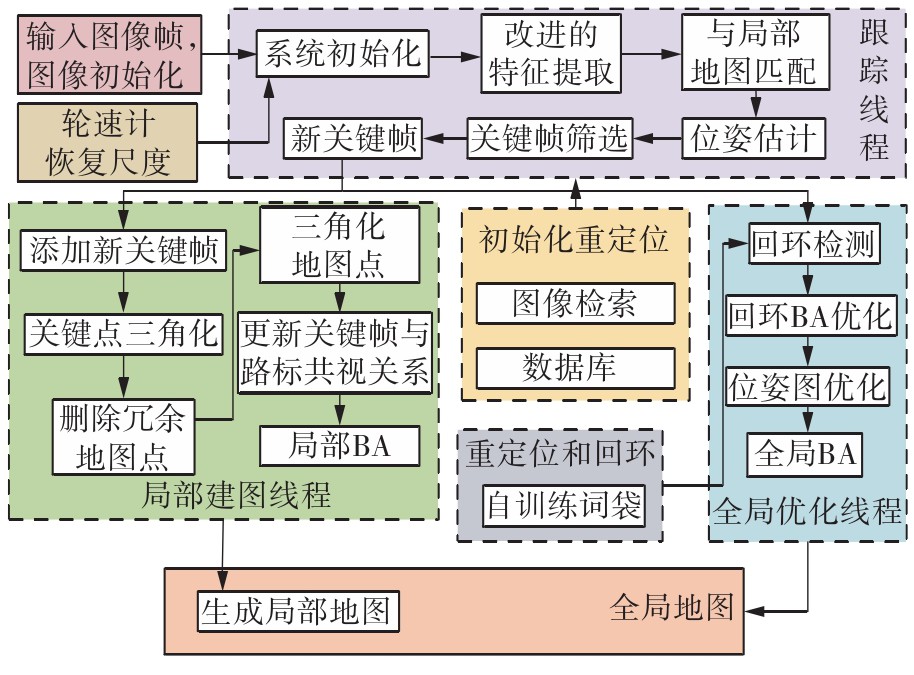

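HU Xizhi, CUI Bofei, WANG Qin, LIU Hong. Visual SLAM Algorithm Based on Memory Parking Scene[J]. Journal of South China University of Technology (Natural Science Edition), 2024, 52(6): 1-11. DOI: 10.12141/j.issn.1000-565X.230262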

With the development of autonomous driving, visual simultaneous localization and mapping (SLAM) has attracted growing attention. In the memory parking scenario, a prior map of the parking lot must be built first; when the vehicle enters the same parking lot again, visual SLAM is used for mapping and localization. To improve the robustness, accuracy and efficiency of SLAM mapping, a lightweight deep learning algorithm is first used to address the poor robustness of traditional feature extraction algorithms across different scenes, and depthwise separable convolution replaces ordinary convolution, which greatly improves the efficiency of feature extraction. Next, the Patch-NetVLAD algorithm is improved based on the ResNet network, and both the improved residual network and the original VGG network are retrained on the MSLS dataset. Image retrieval is used for coarse localization to select candidate image frames, and the camera pose is then solved by fine localization, completing relocalization for global initialization. On this basis, an improved bag-of-words algorithm is used to retrain on images from different parking lot scenes, and all the algorithms are ported to the OpenVSLAM framework to perform mapping and localization in real scenes. The experimental results show that the proposed visual SLAM system can complete mapping in multiple scenes, including above-ground parking lots, underground parking lots and outdoor semi-enclosed campus roads, with an average longitudinal localization error of 8.42 cm and an average lateral localization error of 8.30 cm, both of which meet engineering requirements.
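
The efficiency gain from swapping ordinary convolution for depthwise separable convolution can be illustrated with a simple cost count. The sketch below is not the authors' network: the layer sizes (3×3 kernel, 64 input channels, 128 output channels, 60×80 output map) are hypothetical values chosen only for illustration, and biases are ignored.

```python
def standard_conv_cost(k, c_in, c_out, h_out, w_out):
    """Weights and multiply-accumulates (MACs) of a k x k standard convolution."""
    params = k * k * c_in * c_out          # every output channel sees every input channel
    macs = params * h_out * w_out          # one full filter bank applied per output pixel
    return params, macs


def depthwise_separable_cost(k, c_in, c_out, h_out, w_out):
    """Weights and MACs of a depthwise k x k convolution followed by a 1 x 1 pointwise one."""
    depthwise = k * k * c_in               # one k x k filter per input channel
    pointwise = c_in * c_out               # 1 x 1 convolution that mixes channels
    params = depthwise + pointwise
    macs = params * h_out * w_out
    return params, macs


if __name__ == "__main__":
    # Hypothetical layer sizes, not taken from the paper.
    layer = dict(k=3, c_in=64, c_out=128, h_out=60, w_out=80)
    std_params, std_macs = standard_conv_cost(**layer)
    sep_params, sep_macs = depthwise_separable_cost(**layer)
    print("standard  params:", std_params, "MACs:", std_macs)
    print("separable params:", sep_params, "MACs:", sep_macs)
    print("cost ratio:", sep_macs / std_macs)
```

For this hypothetical layer the weight count drops from 73,728 to 8,768, roughly 12% of the original, which matches the general ratio 1/c_out + 1/k² for depthwise separable convolution. Actual speedups in a feature extraction network depend on the architecture and hardware.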
