Repositioning Strategy for Ride-Hailing Vehicles Based on Geometric Road Network Structure and Reinforcement Learning

Received date: 2023-03-28

Online published: 2023-06-21

Supported by

the Guangdong Basic and Applied Basic Research Foundation (2020A1515111024)

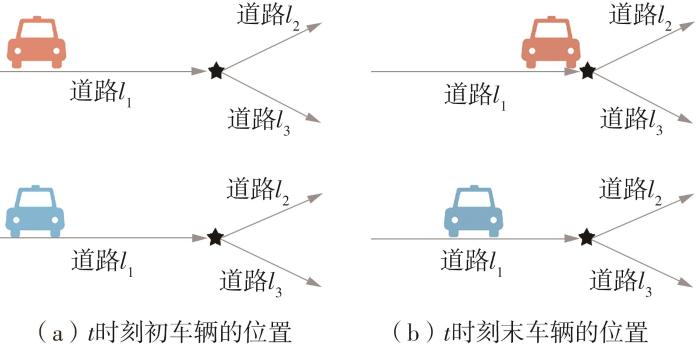

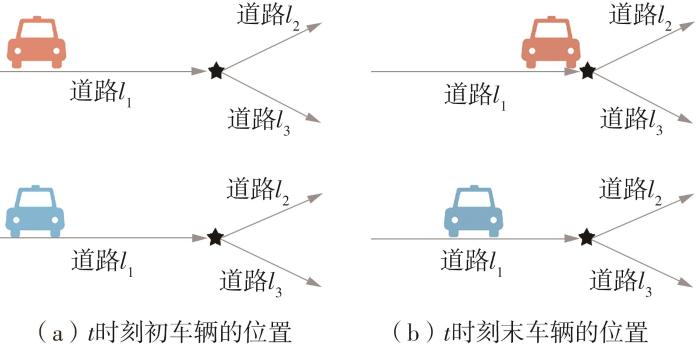

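XU Lunhui, YU Jiaxin, PEI Mingyang, et al. Repositioning strategy for ride-hailing vehicles based on geometric road network structure and reinforcement learning[J]. Journal of South China University of Technology (Natural Science Edition), 2023, 51(10): 99-109. DOI: 10.12141/j.issn.1000-565X.230148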

Ride-hailing drivers and passengers search for each other inefficiently and inaccurately, which causes a mismatch between supply and demand. A vehicle repositioning strategy dispatches vehicles in advance to areas where demand is expected, improving supply-demand matching. Most existing studies, however, represent the urban road environment as a grid, which lacks geometric and topological information and reduces dispatch accuracy. To address this issue, a ride-hailing vehicle repositioning algorithm called GA2C is proposed, based on graph neural networks (GNN) and the actor-critic reinforcement learning algorithm (A2C). The algorithm has a smoother learning process and supports high-dimensional sampling, which makes it suitable for learning optimal multi-agent repositioning strategies for large fleets of ride-hailing vehicles. The urban road environment is represented by the geometric road network structure, so the GNN can serve as a function approximator that learns the geometric information of the road network. In addition, an action sampling strategy based on the action-value function is introduced to increase the randomness of action selection, effectively preventing competition. A ride-hailing vehicle repositioning simulation built in Python gave the following results: (i) the order response rate of GA2C is 84.2%, significantly higher than in all comparison experiments; (ii) in the order-distribution comparison experiments, the relative improvements of GA2C under the uniform, central, block, and checkerboard layouts are 1.17%, 6.02%, 13.12%, and 14.55%, respectively. These results show that GA2C can effectively reposition ride-hailing vehicles. When the order distribution is markedly uneven and the demand areas are close to one another, the algorithm learns dynamic demand changes better and achieves the maximum order response rate by repositioning vehicles.
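
The abstract does not give the exact form of the action sampling strategy. As a minimal sketch, assuming the strategy is a softmax (Boltzmann) sample over action values, the following Python example shows why sampling spreads vehicles across neighbouring nodes while greedy selection sends them all to the same place. The function names, the `temperature` parameter, and the action values are hypothetical and are not taken from the paper.

```python
import math
import random
from collections import Counter


def softmax(values, temperature=1.0):
    """Turn action values into a probability distribution (numerically stable)."""
    peak = max(values)
    exps = [math.exp((v - peak) / temperature) for v in values]
    total = sum(exps)
    return [e / total for e in exps]


def sample_action(action_values, rng, temperature=1.0):
    """Sample one action index with probability proportional to exp(Q / temperature)."""
    probs = softmax(action_values, temperature)
    return rng.choices(range(len(action_values)), weights=probs, k=1)[0]


# Hypothetical action values for vehicles idling at one road-network node:
# index 0 = stay, indices 1-3 = move to an adjacent node. Illustrative numbers only.
q_values = [1.0, 2.0, 1.8, 0.5]

rng = random.Random(0)  # fixed seed so the run is repeatable
n_vehicles = 1000

# Greedy selection: every vehicle picks the same highest-valued action.
best = max(range(len(q_values)), key=q_values.__getitem__)
greedy = Counter(best for _ in range(n_vehicles))

# Value-based sampling: vehicles spread out, still favouring high-value actions.
sampled = Counter(sample_action(q_values, rng) for _ in range(n_vehicles))

print("greedy :", dict(greedy))
print("sampled:", dict(sorted(sampled.items())))
```

Under greedy selection all 1000 vehicles head for the same node and would compete for the same orders. Under sampling they are distributed roughly in proportion to the softmax probabilities, and a lower `temperature` moves the behaviour back toward greedy.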
