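Received date: 2022-12-24

Online published: 2023-04-20

Supported by

Guangdong Provincial Key R&D Program (2021B0101420002); the Ministry of Education's Industry-University Cooperative Education Project (201902186007); Guangzhou Key R&D Program (202007040002); Guangzhou Development Zone International Cooperation Project (2020GH10)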

AdfNet: An Adaptive Deep Forgery Detection Network Based on Diverse Features

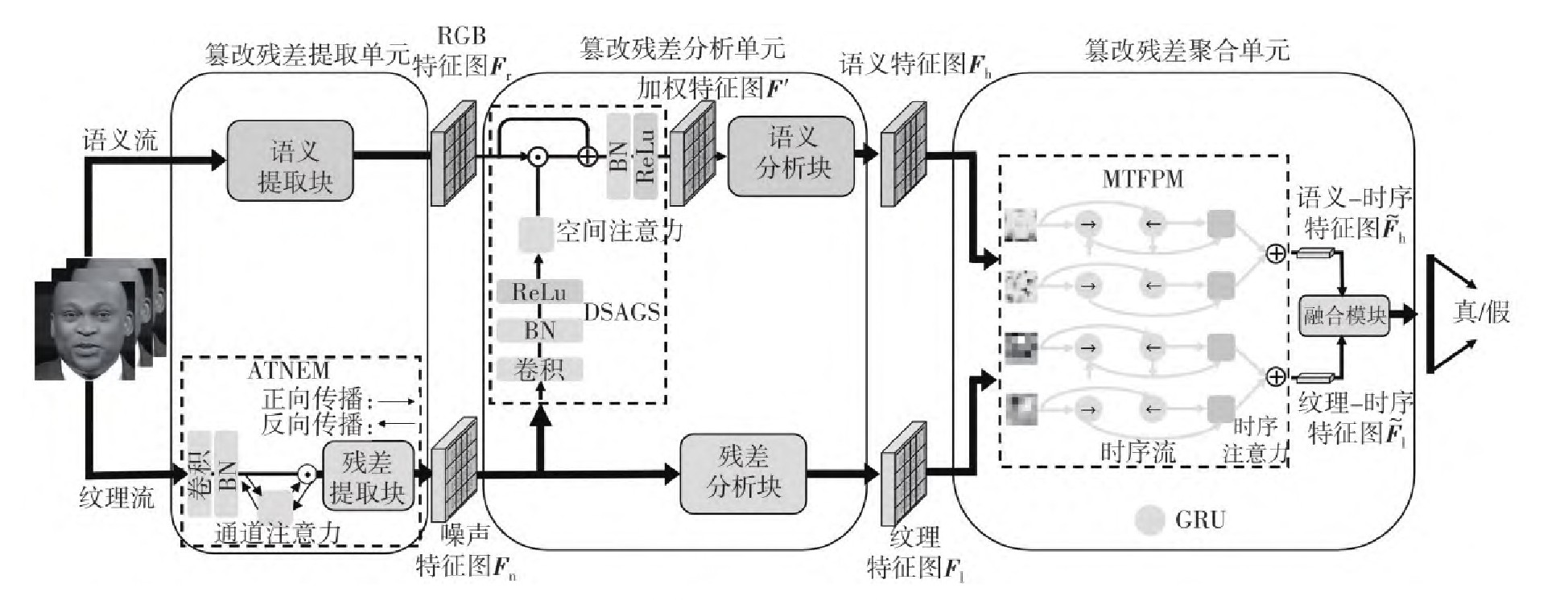

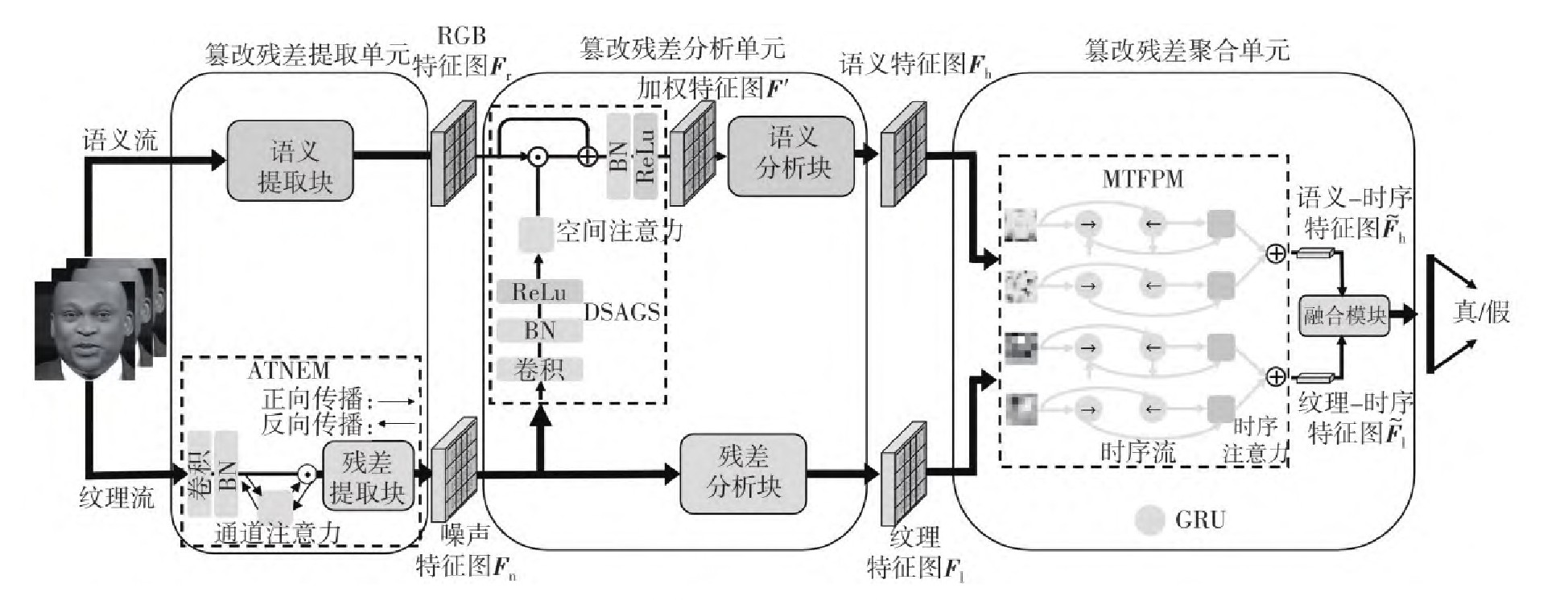

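LI Jiachun, LI Bowen, LIN Weiwei. AdfNet: An adaptive deep forgery detection network based on diverse features[J]. Journal of South China University of Technology (Natural Science Edition), 2023, 51(9): 82-89. DOI: 10.12141/j.issn.1000-565X.220825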

The harm caused by video tampering continues to threaten people's lives, and deep forgery detection has consequently attracted growing attention. However, current detection methods rely on inflexible constraints and therefore cannot capture noise residuals effectively. They also ignore the correlation between texture and semantic features, as well as the contribution of temporal features to detection performance. To address these problems, this paper proposes AdfNet, an adaptive network with diverse features for deep forgery detection, which extracts semantic, texture and temporal features to help the classifier judge authenticity. An adaptive texture noise extraction mechanism (ATNEM) is explored, which flexibly captures noise residuals in non-fixed frequency bands through unpooled feature maps and a frequency-domain channel attention mechanism. A deep semantic analysis guidance strategy (DSAGS) is designed, which highlights tampering traces through a spatial attention mechanism and guides the feature extractor to focus on the deep features of focal regions. A multi-scale temporal feature processing method (MTFPM) is studied, which uses a temporal attention mechanism to assign weights to different video frames and capture temporal differences in tampered videos. Experimental results show that the proposed network achieves an ACC of 97.41% in the HQ mode of the FaceForensics++ (FF++) dataset, a clear improvement over current mainstream networks. Moreover, while maintaining an AUC of 99.80% on FF++, it reaches an AUC of 76.41% on Celeb-DF, indicating strong generalization.
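The abstract states that temporal attention assigns weights to different video frames but does not give its form. As a minimal sketch of the general idea only, and not the authors' MTFPM, the following assumes per-frame feature vectors are scored against a hypothetical query vector with a scaled dot product, normalized with softmax, and used to pool the frames. The function names, the query vector and the toy data are all illustrative assumptions.

```python
import math


def softmax(scores):
    """Numerically stable softmax over a list of floats."""
    peak = max(scores)
    exps = [math.exp(s - peak) for s in scores]
    total = sum(exps)
    return [e / total for e in exps]


def temporal_attention_pool(frame_features, query):
    """Weight per-frame features by attention and return (weights, pooled).

    frame_features: list of equal-length feature vectors, one per frame.
    query: hypothetical vector of the same length (learned in a real model).
    """
    dim = len(query)
    # Scaled dot-product score for each frame.
    scores = [
        sum(f * q for f, q in zip(feat, query)) / math.sqrt(dim)
        for feat in frame_features
    ]
    weights = softmax(scores)
    # Weighted sum of frame features, one output value per dimension.
    pooled = [
        sum(w * feat[i] for w, feat in zip(weights, frame_features))
        for i in range(dim)
    ]
    return weights, pooled


# Toy input (assumed for illustration): 3 frames with 2-dimensional features.
frames = [[1.0, 0.0], [0.0, 1.0], [3.0, 0.0]]
query = [1.0, 0.0]
weights, pooled = temporal_attention_pool(frames, query)
```

In this toy case the third frame aligns most strongly with the query, so it receives the largest weight and dominates the pooled video-level feature. In a trained network the query and the frame features would both be learned.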
