Road Markings Condition Assessment Method for Intelligent Vehicles

Received date: 2022-03-15

Online published: 2022-05-04

Supported by: the National Natural Science Foundation of China (51778242)

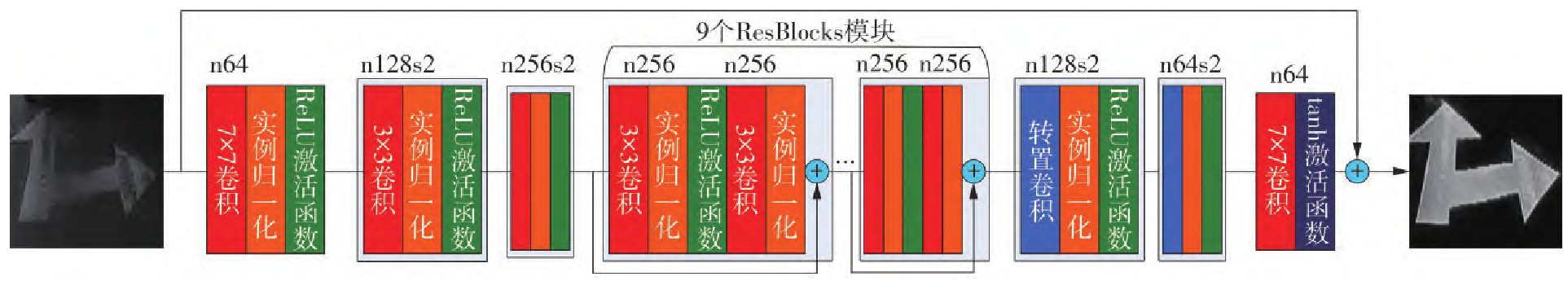

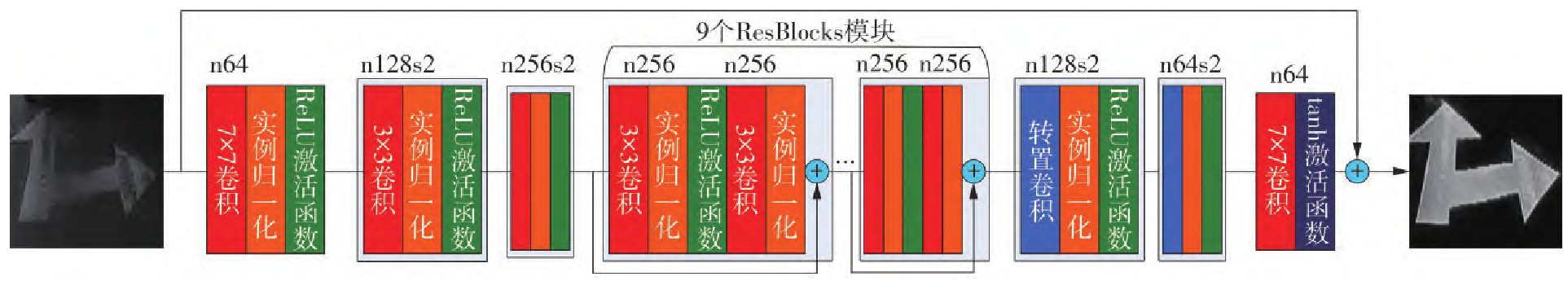


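FU Xinsha, PENG Jinhui, ZENG Yanjie, et al. Road markings condition assessment method for intelligent vehicles[J]. Journal of South China University of Technology (Natural Science Edition), 2022, 50(11): 1-13. DOI: 10.12141/j.issn.1000-565X.220126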

With the continuous advancement and popularization of autonomous driving technology, more and more autonomous vehicles will appear on the road, and the users served by road markings will gradually shift from human drivers to autonomous vehicles. Existing methods of road marking condition assessment have two shortcomings: they require a lot of manpower to inspect, measure and evaluate, and their evaluation indexes are derived from research on biological vision, which does not match the characteristics of autonomous vehicles that rely on machine vision. To solve these problems, this paper proposes a road marking condition assessment method for autonomous vehicles. First, based on the characteristics of autonomous vehicles, the peak signal-to-noise ratio (PSNR) was preliminarily selected as the evaluation index by means of literature review, analogical reasoning and logical reasoning. Second, to obtain the PSNR quickly and accurately, a PSNR calculation method based on image inpainting is proposed: the DeblurGAN model, which is built on conditional generative adversarial networks, restores the damaged road markings at the image level, and the PSNR is then calculated from the damaged and restored road marking images. In addition, a data augmentation method that can realistically synthesize images of damaged road markings is proposed to improve the performance of the image inpainting model. Third, controlled experiments with the AlexNet network as the benchmark model were designed to study the relationship between the PSNR and the recognition accuracy of road markings. Finally, the results were applied to an actual road marking condition assessment and compared with the assessment method in the current standard. The experimental results show that, compared with the PSNR calculation based on manually restored images, the average PSNR obtained by the proposed method differs by only about 2.24%, while the acquisition speed increases by about 418 times. The PSNR affects the recognition accuracy of road markings: when the average PSNR differs by about 43.66%, the average recognition accuracy differs by about 36.27%, so the PSNR can measure the condition of road markings. Compared with the assessment method in the current standard, the proposed method improves work efficiency by about 6.5 times, consumes less manpower and is more in line with the characteristics of autonomous vehicles, although the method in the standard is more detailed.
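
The PSNR between a damaged road marking image and its restored counterpart can be illustrated with a minimal sketch. It uses the standard PSNR definition, 10·log10(MAX² / MSE), and assumes 8-bit images (MAX = 255) with the restored image as the reference; the abstract does not state the exact formulation used in the paper. The function name `psnr`, the parameter `max_value` and the synthetic arrays are hypothetical, and the restoration step (DeblurGAN in the paper) is not reproduced here.

```python
import numpy as np


def psnr(restored, damaged, max_value=255.0):
    """Standard PSNR in dB between two images of identical shape.

    Assumption: the restored image serves as the reference and pixel
    values lie in [0, max_value] (8-bit images by default).
    """
    restored = np.asarray(restored, dtype=np.float64)
    damaged = np.asarray(damaged, dtype=np.float64)
    if restored.shape != damaged.shape:
        raise ValueError("images must have the same shape")
    mse = np.mean((restored - damaged) ** 2)
    if mse == 0:
        # Identical images: no damage detected, PSNR is unbounded.
        return float("inf")
    return float(10.0 * np.log10(max_value ** 2 / mse))


# Synthetic stand-ins for a restored marking and a worn version of it.
restored_img = np.full((8, 8), 200, dtype=np.uint8)
damaged_img = restored_img.copy()
damaged_img[2:6, 2:6] = 120  # hypothetical worn patch

print(f"PSNR: {psnr(restored_img, damaged_img):.2f} dB")
```

A lower PSNR means the damaged image deviates more from its restored version, which is the sense in which the abstract uses PSNR as an indicator of marking condition.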

