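Received date: 2022-02-11

Online published: 2022-04-07

Supported by

the Guangdong Provincial Special Project for the Development of Ocean Economy (GDNRC[2020]018); the Key-Area R&D Project of Guangdong Province (2019B020214001); the Guangzhou Major Industrial Technology Research Program (2019-01-01-12-1006-0001); the Fundamental Research Funds for the Central Universities, South China University of Technology (2018KZ05); the Graduate Education Reform Project of South China University of Technology (zysk2018005)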

Two-Stream Adaptive Attention Graph Convolutional Networks for Action Recognition

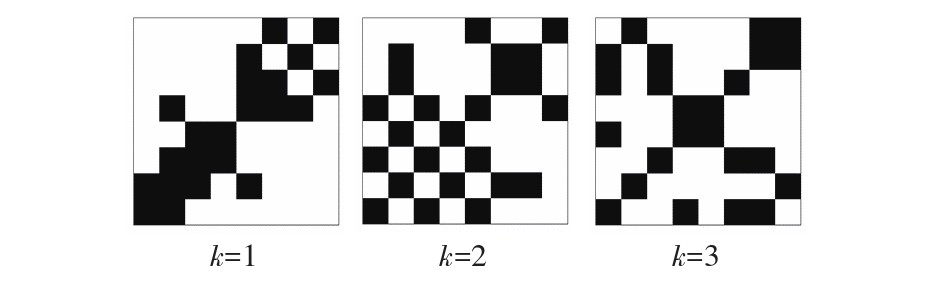

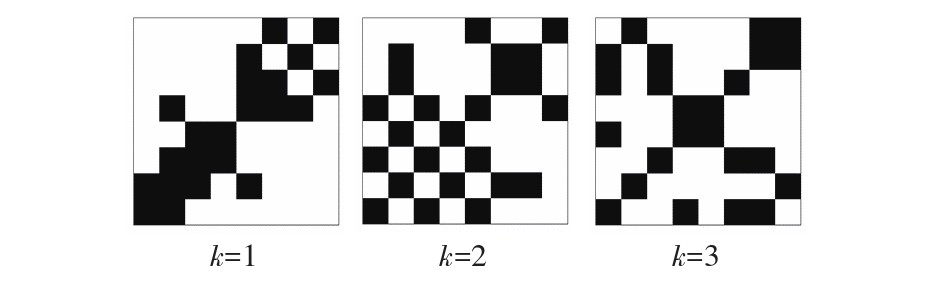

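DU Qiliang, XIANG Zhaoyi, TIAN Lianfang, et al. Two-stream adaptive attention graph convolutional networks for action recognition [J]. Journal of South China University of Technology (Natural Science Edition), 2022, 50(12): 20-29. DOI: 10.12141/j.issn.1000-565X.220055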

Human action recognition has attracted considerable attention in computer vision because of its important role in public safety. However, when fusing the neighborhood features of multi-scale nodes, existing graph convolutional networks usually sum the adjacency matrices of each order directly. Every term then carries the same importance, which makes it hard for the model to focus on important features and hinders the establishment of optimal node relationships. In addition, the common two-stream fusion method, which averages the prediction results of different models, ignores differences in the underlying data distributions, so the fusion effect is poor. To address these problems, this paper proposes a two-stream adaptive attention graph convolutional network for human action recognition. First, a multi-order adjacency matrix with adaptively balanced weights is designed so that the model focuses on the more important neighborhoods. Second, a multi-scale spatio-temporal self-attention module and a channel attention module are designed to enhance the feature extraction capability of the model. Finally, a two-stream fusion network is proposed that uses the data distribution of the two-stream prediction results to determine the fusion coefficients, improving the fusion effect. The algorithm achieves recognition accuracies of 92.3% and 97.5% on the cross-subject and cross-view benchmarks of NTU RGB+D, respectively, and 39.8% on the Kinetics-Skeleton dataset, all higher than those of existing algorithms, which indicates the superiority of the proposed algorithm for human action recognition.
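The contrast between directly summing multi-order adjacency matrices and weighting them adaptively can be illustrated with a minimal NumPy sketch. It is not the paper's formulation: the function names, the 5-joint chain graph, the softmax parameterization, and the fixed logits are all assumptions made for illustration. In a trained network the logits would presumably be learnable parameters.

```python
import numpy as np


def k_hop_adjacency(adj, max_order):
    """Split a graph into exact-distance adjacency matrices.

    Returns [A_0, A_1, ..., A_K], where A_k[i, j] = 1 if the shortest path
    between joints i and j has exactly k edges (A_0 is the identity).
    """
    n = adj.shape[0]
    step = (adj > 0) | np.eye(n, dtype=bool)  # move along one edge or stay
    reach = np.eye(n, dtype=bool)             # joints within 0 hops
    hops = [np.eye(n)]
    for _ in range(max_order):
        reach_next = (reach.astype(int) @ step.astype(int)) > 0
        hops.append((reach_next & ~reach).astype(float))  # newly reached joints
        reach = reach_next
    return hops


def softmax(x):
    e = np.exp(x - np.max(x))
    return e / e.sum()


def fuse_orders(hops, logits=None):
    """Combine the per-order matrices into one adjacency matrix.

    logits=None reproduces the direct sum (every order has weight 1).
    Otherwise softmax(logits) yields one weight per order (assumed scheme).
    """
    if logits is None:
        weights = np.ones(len(hops))
    else:
        weights = softmax(np.asarray(logits, dtype=float))
    fused = sum(w * a for w, a in zip(weights, hops))
    return fused, weights


# Toy skeleton: a 5-joint chain 0-1-2-3-4 (hypothetical, not a dataset layout).
adj = np.zeros((5, 5))
for i in range(4):
    adj[i, i + 1] = adj[i + 1, i] = 1.0

hops = k_hop_adjacency(adj, max_order=2)
direct_sum, _ = fuse_orders(hops)                                   # equal importance
weighted_sum, weights = fuse_orders(hops, logits=[0.0, 1.0, -1.0])  # favors 1-hop
```

With the direct sum, a 2-hop neighbor contributes exactly as much as a 1-hop neighbor. With the weighted version, the relative contribution of each order follows `weights`, which is the kind of rebalancing the abstract describes.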
