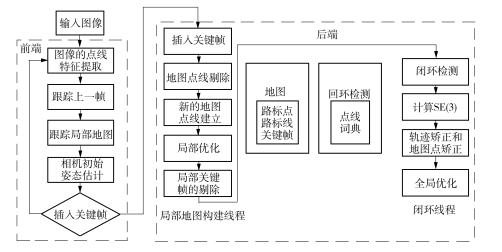

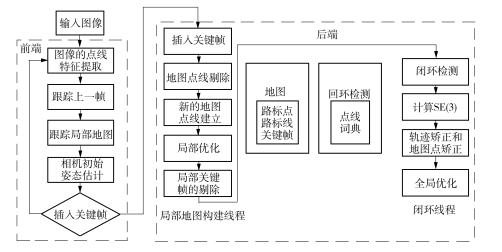

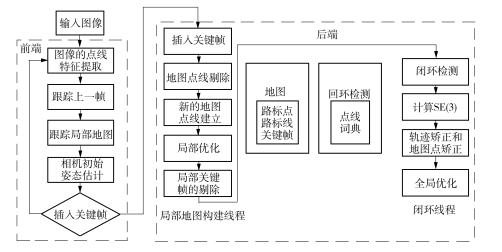

To address the difficulty of extracting feature points and the low accuracy of the ORB-SLAM algorithm in large-scene, weak-texture environments, this paper proposes PAL-SLAM, a point-line feature fusion SLAM algorithm based on an RGB-D camera. Building on ORB-SLAM, a new point-line feature fusion framework is designed. The fusion principle of point and line features is studied to derive a re-projection error model for point-line fusion, and the analytical form of the model's Jacobian matrix is obtained; the PAL-SLAM framework is built on this basis. Comparative experiments between PAL-SLAM and ORB-SLAM on the TUM dataset show that PAL-SLAM achieves higher positioning accuracy in large indoor scenes, with a standard error only 18.9% of that of ORB-SLAM. PAL-SLAM thus reduces the positioning error of traditional visual SLAM in large-scene, weak-texture environments and effectively improves system accuracy. An experimental platform based on Kinect V2 was also built, and the results show that PAL-SLAM integrates well with the hardware platform.
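To make the idea of a point-line re-projection error concrete, the following is a minimal sketch of one common formulation, not the model derived in the paper (the abstract does not give its exact form). It assumes a pinhole camera with intrinsics `K`, a world-to-camera pose `T_cw`, a point residual equal to the pixel difference, and a line residual equal to the signed distance from each projected 3D endpoint to the observed 2D line. The function names and the weight `w_line` are hypothetical.

```python
import numpy as np


def project(K, T_cw, X_w):
    """Project a 3D world point to pixel coordinates (pinhole model)."""
    X_c = T_cw[:3, :3] @ X_w + T_cw[:3, 3]  # world frame -> camera frame
    uvw = K @ X_c
    return uvw[:2] / uvw[2]


def point_residual(K, T_cw, X_w, uv_obs):
    """Pixel difference between an observed point and its re-projection."""
    return uv_obs - project(K, T_cw, X_w)


def line_residual(K, T_cw, P_w, Q_w, p_obs, q_obs):
    """Signed pixel distances from the projected 3D endpoints P_w, Q_w
    to the 2D line through the observed endpoints p_obs, q_obs."""
    # Homogeneous line through the two observed endpoints.
    l = np.cross(np.append(p_obs, 1.0), np.append(q_obs, 1.0))
    # Normalise so that l . [u, v, 1] is a distance in pixels.
    l = l / np.linalg.norm(l[:2])
    p_proj = np.append(project(K, T_cw, P_w), 1.0)
    q_proj = np.append(project(K, T_cw, Q_w), 1.0)
    return np.array([l @ p_proj, l @ q_proj])


def fused_cost(point_residuals, line_residuals, w_line=1.0):
    """Sum of squared point residuals plus weighted squared line residuals.
    The scalar weight w_line is an assumption made for illustration."""
    cost_points = sum(float(r @ r) for r in point_residuals)
    cost_lines = sum(float(r @ r) for r in line_residuals)
    return cost_points + w_line * cost_lines


# Example with an identity pose and assumed intrinsics.
K = np.array([[500.0, 0.0, 320.0],
              [0.0, 500.0, 240.0],
              [0.0, 0.0, 1.0]])
T_cw = np.eye(4)

r_point = point_residual(K, T_cw, np.array([0.0, 0.0, 2.0]),
                         np.array([320.0, 240.0]))
r_line = line_residual(K, T_cw,
                       np.array([-1.0, 0.0, 2.0]), np.array([1.0, 0.0, 2.0]),
                       np.array([0.0, 245.0]), np.array([640.0, 245.0]))
total = fused_cost([r_point], [r_line])
```

In a pose optimiser, a cost of this kind would be minimised over `T_cw`; an analytical Jacobian such as the one the paper derives avoids the expense of numerical differentiation in that step.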